The CFO still closes the books in 22 days. The sales team still hits quota at 24%. The AP team still works the exact same way they did in 2022.

You have spent millions on AI. You bought the licenses. You paid for the tokens. Your leadership team talks non-stop about being “AI-first.”

So what exactly did you buy?

If you ask the people doing the actual work what has changed in their day-to-day, the answer is almost always some version of “nothing” or “poor results.”

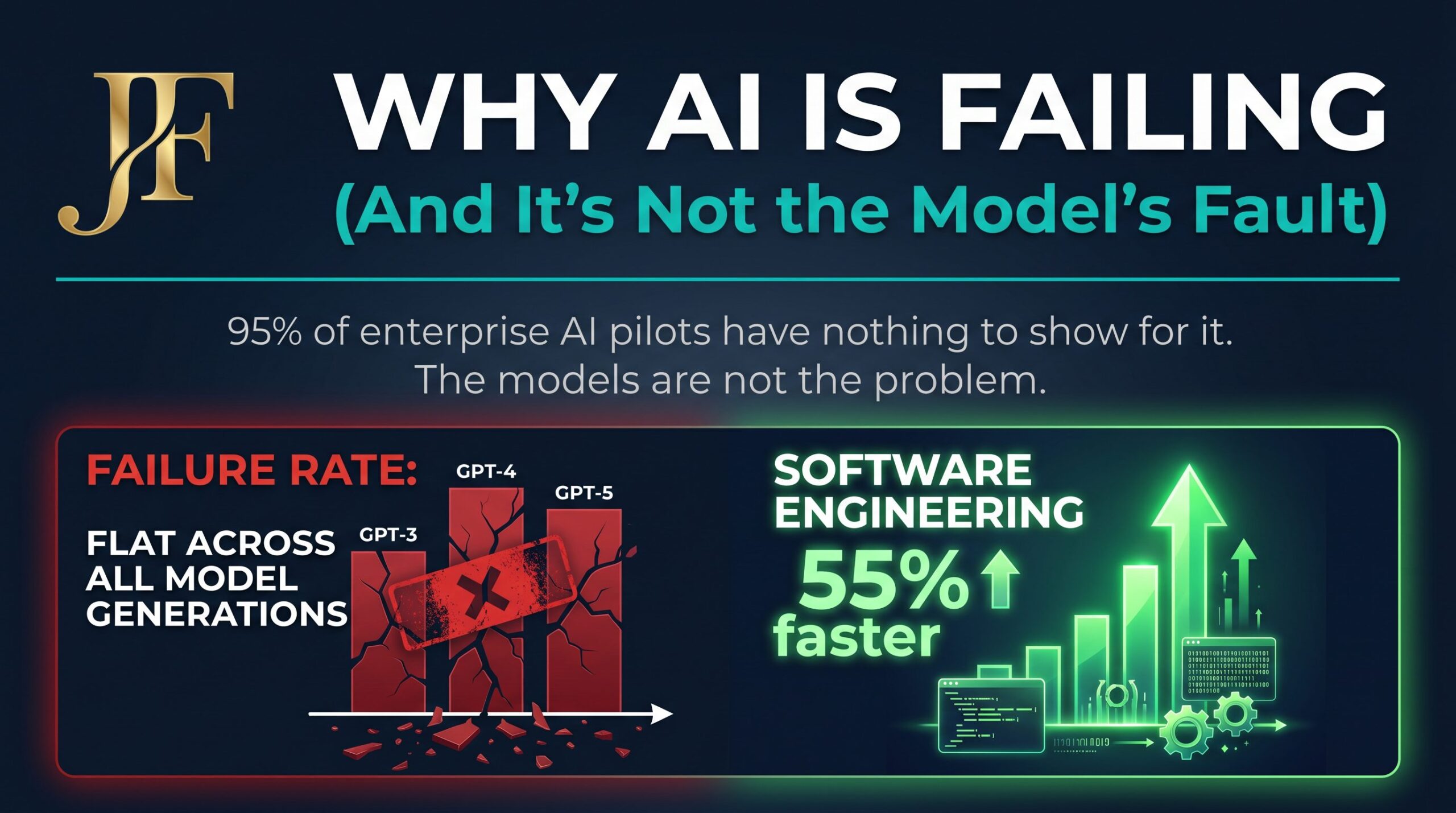

This isn’t just a feeling. It’s a statistical reality. And the uncomfortable truth is that the AI models are not the problem.

The Scary Numbers

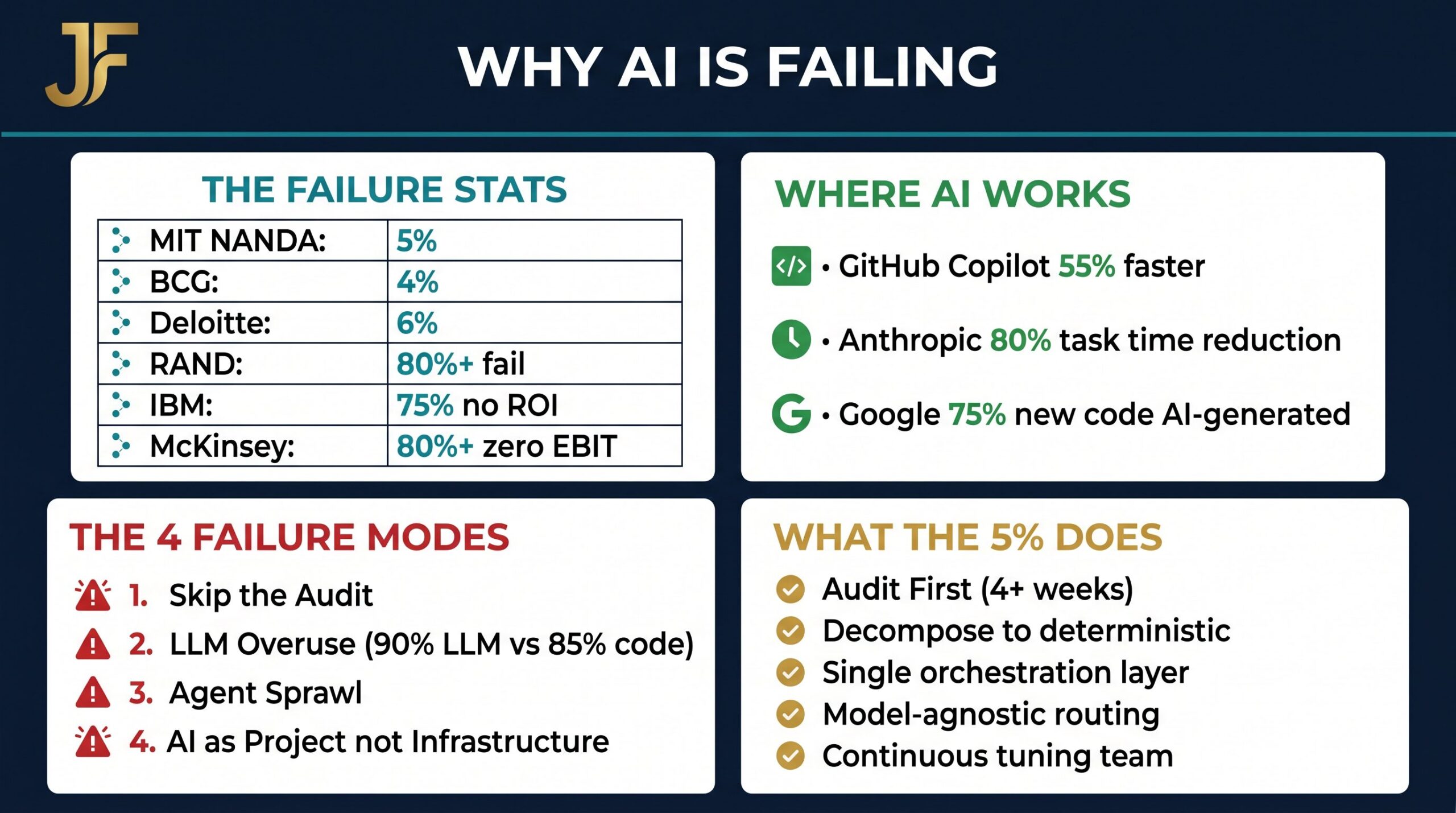

Major consulting firms and research institutions have run the numbers on enterprise AI adoption. Their findings point to the same brutal reality: the vast majority of enterprise AI pilots fail to deliver measurable business value.

| Source | Finding |
|---|---|
| MIT NANDA GenAI Divide | Only 5% of enterprise AI pilots deliver measurable value |
| BCG | 4% of enterprise AI deployments meet ROI targets |
| Deloitte | 6% of AI programs show meaningful business impact |
| RAND Corporation | 80%+ of enterprise AI projects fail outright |
| IBM | 75% of AI deployments report no measurable ROI |
| McKinsey | 80%+ of companies report zero EBIT impact from AI |

Here is the kicker: that failure rate has been flat through GPT-3, GPT-4, GPT-5, Claude 2 through 4.7, and Gemini 1 through 3. Every model generation gets smarter, faster, and cheaper. Yet the enterprise failure rate remains identical.

The model is not the bottleneck. As we explored in Issue #56 on the Micro-Productivity Trap, the bottleneck is the process underneath the model.

The One Place AI Is Actually Working

There is one group of people for whom AI is working really well at scale right now: software engineers.

The productivity gains in engineering are staggering:

- GitHub’s controlled Copilot experiment (published in 2022) clocked Copilot users at 55% faster on an assigned coding task.

- Anthropic’s internal study (Aug 2025) across 132 engineers showed AI cuts developer task completion time by roughly 80%.

- Google reported at the start of 2026 that 75% of new code is AI-generated and engineer-approved (up from 30% in April 2025).

Why does it work so well for engineers, but fail for finance, sales, and operations? Because engineering work has four properties that almost no other enterprise function has:

| Property | Engineering | Finance / Sales / Ops |
|---|---|---|
| Bounded | A function takes inputs and returns outputs. Scope lives inside a file. | Spans multiple systems, banks, ERPs, and undocumented exceptions. |
| Checkable | Compilers tell you in milliseconds if it works. Instant feedback loop. | “The close was clean” takes two senior accountants two days to verify. |
| Structured | Code lives in version control with deterministic build pipelines. | The “process” is documented in an SOP that doesn’t match reality. |
| Verifiable | A pull request is a discrete artifact a human can review in 10 minutes. | Output is a judgment call that requires domain expertise to validate. |

Why Every Other Function Is Flat

Contrast software engineering with a finance close. Finance involves AP, AR, intercompany reconciliations, FX, accruals, and exception handling. It spans NetSuite, Concur, three banks, two legacy ERPs, a custom intake form, and a Slack channel where the controller flags “weird stuff.”

The “process” is documented in an SOP that doesn’t match what actually happens. Pointing a generic LLM at this mess gives you negative ROI. The operator who used to do the work in 30 minutes now spends 30 minutes doing the work, plus another 30 minutes correcting the AI’s hallucinations.

The 4 Failure Modes of Enterprise AI

When you look at the 95% of AI pilots that fail, they almost always trace back to the same four mistakes.

1. They Skip the Audit

The single biggest predictor of failure is building before understanding the actual workflow. Companies build for the documented SOP, not reality. But the actual workflow always includes the “I always check this spreadsheet first” step, or the 17 unwritten exception types. We call this the conformance gap. If you build for the SOP, you automate 70% of the volume and break on the 30% of exceptions — creating more work for the team.
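To make the conformance gap concrete, here is a minimal sketch of how an audit might quantify it. Everything in it is an assumption for illustration: the step names, the sample cases, and the `conformance_report` helper are hypothetical, and a real audit would pull observed steps from interviews or system logs rather than a hard-coded list.

```python
from collections import Counter

# Hypothetical data: the steps the SOP documents versus the steps operators
# were actually observed performing. All step names are illustrative.
SOP_STEPS = ["receive_invoice", "match_po", "approve", "post_to_erp"]

OBSERVED_CASES = [
    ["receive_invoice", "match_po", "approve", "post_to_erp"],
    ["receive_invoice", "check_spreadsheet", "match_po", "approve", "post_to_erp"],
    ["receive_invoice", "match_po", "email_vendor", "approve", "post_to_erp"],
    ["receive_invoice", "match_po", "approve", "post_to_erp"],
]


def conformance_report(sop_steps, observed_cases):
    """Compare observed cases against the SOP.

    Returns the share of cases that follow the SOP exactly and a count of
    the undocumented steps that appear in the remaining cases.
    """
    documented = set(sop_steps)
    conforming = 0
    undocumented = Counter()
    for case in observed_cases:
        if case == sop_steps:
            conforming += 1
        else:
            undocumented.update(s for s in case if s not in documented)
    share = conforming / len(observed_cases) if observed_cases else 0.0
    return share, undocumented


share, undocumented = conformance_report(SOP_STEPS, OBSERVED_CASES)
print(f"Cases matching the SOP exactly: {share:.0%}")
for step, count in undocumented.most_common():
    print(f"Undocumented step: {step} (seen {count}x)")
```

The undocumented steps are the interesting output. Each one is a candidate for the “I always check this spreadsheet first” behavior that the SOP never mentions.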

2. They Throw Everything at the LLM

Once you have an LLM, every problem looks LLM-shaped. Teams build architectures that are 90% LLM calls and 10% code. The system is slow, expensive, and hallucinates 10% of the time. Production systems that actually work are the opposite: 85% deterministic code and 15% LLM. The LLM only goes where human judgment is absolutely required.
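One way to picture the code-first split is a router that tries plain rules before it ever reaches a model. The sketch below is illustrative only: the `Invoice` fields, the rules, and `llm_judgment_stub` are hypothetical, and the stub stands in for whatever model call a real system would make.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple


@dataclass
class Invoice:
    amount: float
    po_amount: Optional[float]  # None when no purchase order is attached
    note: str = ""


def deterministic_decision(inv: Invoice) -> Optional[str]:
    """Plain rules that need no model. Returns None when no rule applies."""
    if inv.po_amount is None:
        return "reject: missing PO"
    if not inv.note and abs(inv.amount - inv.po_amount) < 0.01:
        return "approve: exact PO match"
    return None


def llm_judgment_stub(inv: Invoice) -> str:
    """Placeholder for a model call; a real system would call an LLM here."""
    return "escalate: needs review"


def route(inv: Invoice,
          judge: Callable[[Invoice], str] = llm_judgment_stub) -> Tuple[str, str]:
    """Try the rules first; use the judgment path only if none apply."""
    decision = deterministic_decision(inv)
    if decision is not None:
        return "code", decision
    return "llm", judge(inv)


invoices = [
    Invoice(100.0, 100.0),
    Invoice(250.0, None),
    Invoice(100.0, 120.0, note="vendor says partial shipment"),
]
paths = [route(inv)[0] for inv in invoices]
print(paths)
```

In this toy batch, two of three invoices never touch the model. The ratio in a real workflow depends entirely on how much of the work the audit shows to be rule-shaped.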

3. Agent Sprawl

This is the silent killer that shows up in month six. Every employee with AI access builds their own bespoke agent. You end up with 100 disconnected systems, no shared memory, and no shared knowledge of how the company runs. When an API changes or a model deprecates, 50 agents break in production, and IT spends half its time playing janitor for workflows nobody owns.
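A shared registry is one possible guard against this. The sketch below assumes a hypothetical inventory where every agent records an owner and its dependencies, so that a deprecation notice can be turned into a list of affected agents. The agent names, owners, and dependency labels are all made up for illustration.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentRecord:
    name: str
    owner: str  # empty string means nobody owns the agent
    dependencies: frozenset = field(default_factory=frozenset)


# Hypothetical inventory; a real one would live in a shared system of record.
REGISTRY = [
    AgentRecord("invoice-matcher", "ap-team", frozenset({"model-a", "erp-api-v1"})),
    AgentRecord("lead-scorer", "", frozenset({"model-a"})),
    AgentRecord("close-checker", "finance-ops", frozenset({"model-b"})),
]


def blast_radius(registry, dependency):
    """Agents that would break if the given model or API went away."""
    return [agent for agent in registry if dependency in agent.dependencies]


def unowned(registry):
    """Names of agents that have no accountable owner."""
    return [agent.name for agent in registry if not agent.owner]


affected = [agent.name for agent in blast_radius(REGISTRY, "model-a")]
print("Breaks if model-a is deprecated:", affected)
print("No owner:", unowned(REGISTRY))
```

The point is less the code than the discipline: if an agent is not in the registry, nobody can answer what breaks when a dependency changes.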

4. Treating AI as a Project, Not Infrastructure

Traditional software stays built. AI does not. Models deprecate, pricing changes, and rate limits tighten. If you budget AI as a “plan, build, ship, move on” project, it will break. The deployments that pay off treat AI as continuously evolving infrastructure with a dedicated team that owns ongoing optimization.

What the 5% Does Differently

The companies that actually see EBITDA impact from AI do the exact opposite of the failure modes above. Based on analysis from Varick Agents / @vasuman and corroborated by Bain, BCG, and McKinsey research:

| Practice | What It Means in Practice |
|---|---|
| 1. Audit before building | Spend 4+ weeks mapping the actual workflow and the conformance gap before touching a model. |
| 2. Decompose the work | Break the process down until most of it is deterministic code, using LLMs only for specific judgment calls. |
| 3. Single orchestration layer | One platform where finance, sales, and ops agents share context, killing agent sprawl on arrival. |
| 4. Stay model-agnostic | Route tasks to the best-fit model, absorbing deprecations without breaking the workflow. |
| 5. Treat it as infrastructure | Assign a tuning team that monitors quality and swaps models continuously, not a project team with a go-live date. |
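The model-agnostic practice in the table can be sketched as a thin routing layer that holds an ordered preference list per task type and falls through when a model is unavailable. This is a toy under stated assumptions: `ModelRouter`, `make_adapter`, and the model names are hypothetical, and the adapters are stand-ins for real vendor SDK calls.

```python
from typing import Callable, Dict, List


class ModelUnavailable(Exception):
    """Raised by an adapter when its model is deprecated or down."""


def make_adapter(name: str, available: bool = True) -> Callable[[str], str]:
    """Build a stand-in adapter; a real one would wrap a vendor SDK."""
    def call(prompt: str) -> str:
        if not available:
            raise ModelUnavailable(name)
        return f"[{name}] {prompt}"
    return call


class ModelRouter:
    def __init__(self,
                 adapters: Dict[str, Callable[[str], str]],
                 routes: Dict[str, List[str]]):
        self.adapters = adapters
        self.routes = routes  # task type -> ordered model preferences

    def run(self, task_type: str, prompt: str) -> str:
        for model_name in self.routes[task_type]:
            try:
                return self.adapters[model_name](prompt)
            except ModelUnavailable:
                continue  # try the next preference
        raise RuntimeError(f"no available model for task type {task_type!r}")


router = ModelRouter(
    adapters={
        "model-a": make_adapter("model-a", available=False),  # simulated deprecation
        "model-b": make_adapter("model-b"),
    },
    routes={"summarize": ["model-a", "model-b"]},
)
result = router.run("summarize", "Q3 variance notes")
print(result)
```

Because the workflow only ever calls `router.run`, swapping or retiring a model becomes a change to the routing table rather than a rewrite of every agent.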

The Operator’s Perspective

This diagnosis maps exactly to the consulting motion used at NetSync when working with enterprise customers. You cannot just buy a model and expect transformation. You have to audit the workflow, architect the solution, build the orchestration, and continuously tune the infrastructure.

People. Process. Technology.

Even the AI labs are quietly conceding this point. Selling the model alone isn’t enough. Selling the runtime isn’t enough. You need the model, the runtime, and the operational fieldwork to embed it into the enterprise.

The “models got smart” chapter is over. The bottleneck is the process. The next decade belongs to the companies that build the operational layer underneath the models — not the ones who pour frontier AI onto a mess of broken systems.

Your AI Transformation Action Plan

Here are three steps you can take this week to stop failing and start transforming:

- Audit one workflow: Before you write a single prompt, sit with the operators and map the conformance gap between the SOP and reality.

- Map pattern vs. judgment: Break the workflow down. What is deterministic (code) and what requires actual human judgment (LLM)?

- Build a tuning function: Stop treating AI as a project with a go-live date. Assign an owner to continuously monitor, tune, and swap models as the landscape shifts.
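For the tuning function, the owner needs some signal to watch. A minimal sketch, assuming operators mark each AI output as accepted or corrected: a rolling acceptance rate with a threshold that flags when quality drifts. The `QualityMonitor` class, the window size, and the threshold are illustrative assumptions, not a recommended standard.

```python
from collections import deque


class QualityMonitor:
    """Track a rolling acceptance rate for AI outputs reviewed by operators."""

    def __init__(self, window: int = 50, threshold: float = 0.9):
        self.results = deque(maxlen=window)  # keeps only the latest reviews
        self.threshold = threshold

    def record(self, accepted: bool) -> None:
        self.results.append(accepted)

    def acceptance_rate(self) -> float:
        if not self.results:
            return 1.0  # nothing reviewed yet, so nothing to flag
        return sum(self.results) / len(self.results)

    def needs_attention(self) -> bool:
        return self.acceptance_rate() < self.threshold


monitor = QualityMonitor(window=10, threshold=0.9)
for accepted in [True] * 8 + [False] * 2:
    monitor.record(accepted)

rate = monitor.acceptance_rate()
print(f"Acceptance rate: {rate:.0%}, needs attention: {monitor.needs_attention()}")
```

A flag like this is only a trigger. The owner still has to decide whether the fix is a prompt change, a new rule in code, or a different model.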

Is your AI program failing because of the model — or because of the process underneath it?

Related AI Pathfinder Articles

If you found this valuable, these related issues from the AI Pathfinder series go deeper on the tools and infrastructure powering AI transformation in 2026:

- Issue #56 — Why AI Pilots Fail: The Micro-Productivity Trap Explained

- Issue #54 — The Great AI Realignment: Microsoft, OpenAI & the Geopolitical Firewall

- Issue #55 — OpenAI on AWS: GPT-5.5, Codex & AI Agents on Bedrock

- Issue #52 — GPT-5.5: Is This the New Leading AI Model?

Frequently Asked Questions

Why do most enterprise AI pilots fail?

Most enterprise AI pilots fail not because the models are bad, but because of four systemic mistakes: skipping the workflow audit, over-relying on LLMs for deterministic work, allowing agent sprawl, and treating AI as a one-time project rather than continuously evolving infrastructure.

What is the “conformance gap” in AI deployments?

The conformance gap is the difference between the documented SOP and what actually happens in a workflow. Most AI deployments are built against the documented process, not the real one — which means they automate 70% of volume and break on the 30% of exceptions that matter most.

Why is AI working for software engineers but not for other functions?

Engineering work is bounded, checkable, structured, and verifiable — four properties that make AI leverage enormous. Finance, sales, and operations work typically lacks all four, which is why generic LLM deployments in those functions produce negative ROI.

What is agent sprawl and why is it dangerous?

Agent sprawl occurs when every employee builds their own bespoke AI agent, resulting in 100+ disconnected systems with no shared memory or governance. When a model deprecates or an API changes, dozens of agents break simultaneously and IT spends its time playing janitor for workflows nobody owns.

What do the 5% of successful enterprise AI programs do differently?

They audit before building, decompose work into deterministic code vs. LLM judgment calls, build a single orchestration layer, stay model-agnostic, and treat AI as continuously evolving infrastructure with a dedicated tuning team — not a project with a go-live date.

References

- Varick Agents / @vasuman on X — “If AI is so great, why isn’t it working?”

- Jason Fleagle on LinkedIn — Why AI Is Failing (And It’s Not the AI Model’s Fault)

- NetSync — Enterprise AI Consulting and Implementation

About Jason Fleagle

Jason Fleagle is a Chief AI Officer, AI Architect, and the founder of Catalyst Brand Group. He specializes in revenue-first, real-world AI deployments and helps enterprises move from AI experimentation to measurable business transformation. He works with enterprise customers through NetSync to audit workflows, architect AI solutions, and build the operational infrastructure that actually delivers ROI.