AI Pathfinder | Issue #52 — Published April 23, 2026

One engineer at NVIDIA who had early access to the model went as far as to say: “Losing access to GPT-5.5 feels like I’ve had a limb amputated.”

That’s not hype. That’s a signal. OpenAI has officially released GPT-5.5, and it represents a fundamental shift in how we interact with AI. It doesn’t just answer questions: it plans, uses tools, checks its work, navigates ambiguity, and keeps going until the task is finished.

This may be the clearest signal yet that we are firmly in the Agentic Era — where the bottleneck is no longer writing or creating, but reviewing, orchestrating, and governing AI tools.

Watch: GPT-5.5 Video Overview

What Is GPT-5.5? The Agentic Shift Explained

GPT-5.5 is OpenAI’s smartest and most intuitive model to date. It excels at writing and debugging code, researching online, analyzing data, creating documents, and operating software. Crucially, it delivers this step up in intelligence without compromising on speed — GPT-5.5 matches GPT-5.4 per-token latency in real-world serving, while performing at a much higher level of intelligence and using significantly fewer tokens to complete the same tasks.

It is rolling out to Plus, Pro, Business, and Enterprise users in ChatGPT and Codex. For API developers, gpt-5.5 will soon be available at $5 per 1M input tokens and $30 per 1M output tokens, with a 1M context window.

GPT-5.5 Benchmark Results: The Numbers That Matter

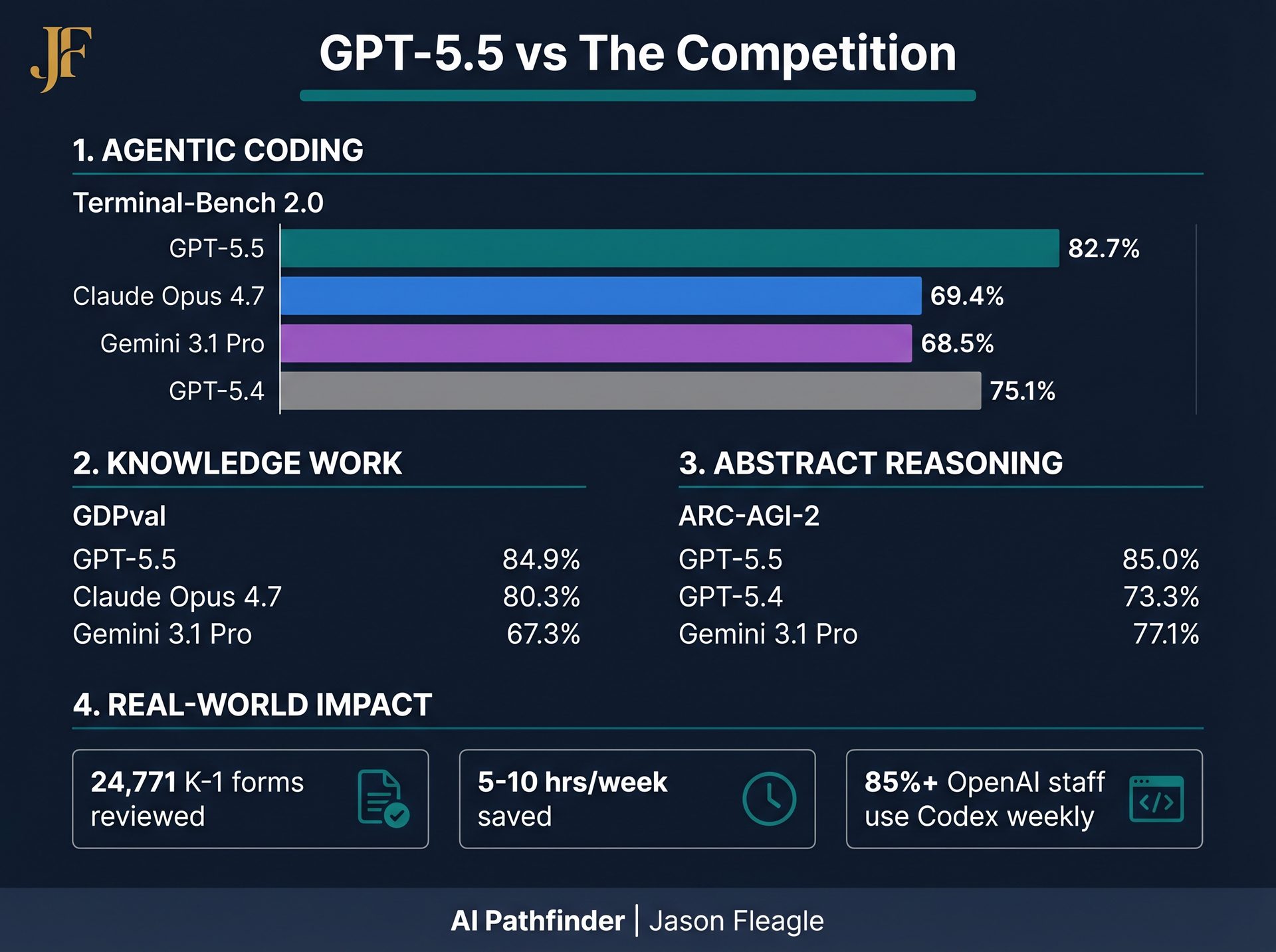

The benchmark data confirms what early testers are feeling. GPT-5.5 establishes a new state-of-the-art across multiple domains when compared to GPT-5.4, Claude Opus 4.7, and Gemini 3.1 Pro:

| Benchmark | GPT-5.5 | GPT-5.4 | Claude Opus 4.7 | Gemini 3.1 Pro |

|---|---|---|---|---|

| Terminal-Bench 2.0 (Agentic Coding) | 82.7% | 75.1% | 69.4% | 68.5% |

| SWE-Bench Pro (GitHub Issues) | 58.6% | 57.7% | 64.3% | 54.2% |

| GDPval (Knowledge Work) | 84.9% | 83.0% | 80.3% | 67.3% |

| OSWorld-Verified (Computer Use) | 78.7% | 75.0% | 78.0% | — |

| ARC-AGI-2 (Abstract Reasoning) | 85.0% | 73.3% | 83.3% | 77.1% |

| CyberGym (Cybersecurity) | 81.8% | 79.0% | 73.1% | — |

Why GPT-5.5 Matters: The Operator Shift

Here is the opinionated take: The bottleneck is no longer writing. It’s reviewing, orchestrating, and governing. And this is especially true for AI agentic use-cases and workflows.

Dan Shipper, Founder and CEO of Every, described GPT-5.5 as “the first coding model I’ve used that has serious conceptual clarity.”

It doesn’t just write code — it understands why something is failing, where the fix needs to land, and what else in the codebase would be affected. This is the shift from an AI assistant to an AI operator. When the AI can hold context across large systems and reason through ambiguous failures, your job changes from “prompt engineer” to “system architect.”

This mirrors the trajectory we covered in our breakdown of OpenAI Codex Desktop Agent and the broader agentic coding movement. GPT-5.5 is the engine that makes those workflows dramatically more capable.

5 Real-World GPT-5.5 Use Cases You Can Try Today

Stop testing AI with trivia. Here are five immediate, practical workflows you can hand off to GPT-5.5 today:

1. Document Review (The K-1 Tax Form Workflow)

Use it to review massive document sets. OpenAI’s finance team used Codex to review 24,771 K-1 tax forms (71,637 pages), accelerating the task by two weeks compared to the prior year. If your team processes large volumes of contracts, compliance docs, or financial statements, this is your first test.

2. Automated Messaging Routing Agent

Build a scoring and risk framework. OpenAI’s Comms team validated an automated Slack agent so low-risk speaking requests could be handled automatically while higher-risk requests route to human review. This is a direct template for any team managing inbound requests at volume.

3. Weekly Business Report Automation

Stop manually pulling data. On OpenAI’s Go-to-Market team, an employee automated generating weekly business reports, saving 5-10 hours a week. Connect your data sources, define your report structure, and let GPT-5.5 in Codex handle the rest.

4. Codebase Re-architecture and Branch Merges

Pietro Schirano, CEO of MagicPath, saw GPT-5.5 merge a branch with hundreds of frontend and refactor changes into a main branch that had also changed substantially — resolving the work in one shot in about 20 minutes. A senior engineer would have taken days.

5. Research Analysis and Data Workflows

Derya Unutmaz, an immunology professor at the Jackson Laboratory, used GPT-5.5 Pro to analyze a gene-expression dataset with 62 samples and nearly 28,000 genes, producing a detailed research report that surfaced key questions and insights — work he said would have taken his team months. This applies equally to business intelligence, market research, and competitive analysis.

The Scientific Research Frontier

GPT-5.5 is showing meaningful gains on scientific and technical research workflows, which require exploring an idea, gathering evidence, testing assumptions, interpreting results, and deciding what to try next. It achieved leading performance on BixBench (bioinformatics) and a clear improvement on GeneBench. In one internal test, it helped discover a new proof about Ramsey numbers in combinatorics — later verified in Lean.

Brandon White, Co-Founder & CEO at Axiom Bio: “If OpenAI keeps cooking like this, the foundations of drug discovery will change by the end of the year.”

Safety, Cybersecurity, and Operator Responsibility

With greater capability comes greater responsibility. OpenAI is treating the biological/chemical and cybersecurity capabilities of GPT-5.5 as “High” under their Preparedness Framework. They are deploying stricter classifiers for potential cyber risk and making cyber-permissive models available through a “Trusted Access for Cyber” program for verified defenders.

The honest take: as these models become more autonomous, the responsibility for governance falls squarely on the operators and teams deploying them. This connects directly to the governance frameworks we discussed in our analysis of Anthropic Opus 4.7 — the pattern is consistent across all frontier labs.

For a deeper look at how AI is being deployed in cybersecurity specifically, see our earlier coverage of GPT-5.4-Cyber and Anthropic Mythos.

Your GPT-5.5 Operator Action Plan

Here is your 3-step action plan for the week:

- Upgrade and Execute: Upgrade your Codex or ChatGPT tier to access GPT-5.5. Don’t just chat with it — run one real, multi-step workflow this week (like a complex data analysis or a codebase refactor).

- Map Your Knowledge Work: Identify your highest-volume repetitive knowledge work (e.g., weekly reports, document reviews, request routing). These are your prime targets for agentic automation.

- Build Your Governance Layer: Before you scale these agents, build a governance framework. Define what requires human review (high-risk) and what can be fully automated (low-risk). The model’s autonomy demands it.

Frequently Asked Questions About GPT-5.5

What is GPT-5.5 and how is it different from GPT-5.4?

GPT-5.5 is OpenAI’s latest and most capable model, released April 23, 2026. Unlike GPT-5.4, it is designed for agentic, multi-step tasks — it can plan, use tools, check its own work, and execute long-horizon workflows without constant human prompting. It achieves 82.7% on Terminal-Bench 2.0 vs. GPT-5.4’s 75.1%, and 85.0% on ARC-AGI-2 vs. 73.3% — while matching GPT-5.4’s per-token latency.

Who has access to GPT-5.5?

GPT-5.5 is rolling out to Plus, Pro, Business, and Enterprise users in ChatGPT and Codex. GPT-5.5 Pro is available to Pro, Business, and Enterprise users. API access is coming very soon at $5 per 1M input tokens and $30 per 1M output tokens.

What are the best use cases for GPT-5.5 in business?

The strongest immediate use cases are: large document review workflows, automated request routing agents, weekly report automation, complex codebase refactoring, and research data analysis. OpenAI’s own teams are saving 5-10 hours per week per employee using these workflows in Codex.

How does GPT-5.5 compare to Claude Opus 4.7 and Gemini 3.1 Pro?

GPT-5.5 leads on Terminal-Bench 2.0 (82.7% vs. Claude’s 69.4% and Gemini’s 68.5%), GDPval knowledge work (84.9% vs. Claude’s 80.3% and Gemini’s 67.3%), and CyberGym (81.8% vs. Claude’s 73.1%). Claude Opus 4.7 leads on SWE-Bench Pro (64.3% vs. 58.6%). For a detailed comparison, see our earlier Anthropic Opus 4.7 analysis.

Is GPT-5.5 safe to use for enterprise workflows?

OpenAI has deployed its strongest set of safeguards to date with GPT-5.5, including targeted testing for advanced cybersecurity and biology capabilities, and feedback from nearly 200 trusted early-access partners. The cybersecurity capabilities are rated “High” under the Preparedness Framework, with stricter classifiers and a Trusted Access for Cyber program for verified defenders. Enterprise users should build a governance layer defining what requires human review before scaling agentic workflows.

What’s the first workflow in your business you’d hand off to an AI that can plan, execute, and check its own work? Let me know in the comments below.

References

- OpenAI. (2026, April 23). Introducing GPT-5.5.

- OpenAI Preparedness Framework v2

- Jason Fleagle on LinkedIn: OpenAI Releases GPT-5.5

About Jason Fleagle

Jason Fleagle is the Head of AI for Netsync and an AI and Growth Consultant working with global brands to help with their successful AI adoption and management. He helps humanize data — so every growth decision an organization makes is rooted in clarity and confidence. Jason has helped lead the development and delivery of over 500 AI projects and tools, and frequently conducts training workshops to help companies understand and adopt AI.