The “shadow Azure” era is over. OpenAI just dropped its three most powerful products directly into Amazon Bedrock. Here is why this is the biggest enterprise AI unlock of the year.

This announcement matters for enterprises because it removes much of the friction that has slowed AI adoption where it counts most: security review, procurement, and the move to production.

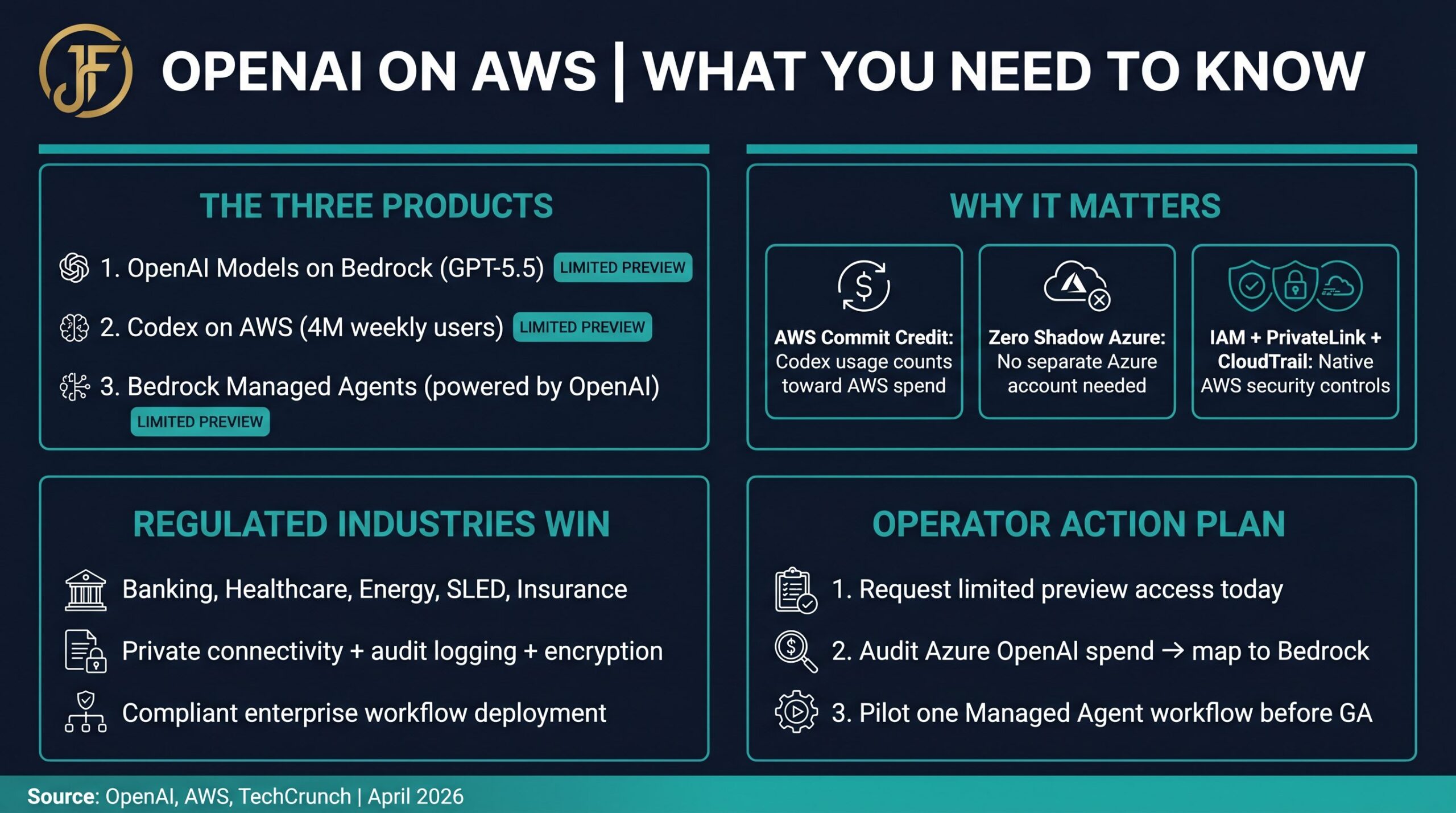

The big idea: enterprises can now use OpenAI’s frontier models, Codex, and managed agents inside AWS instead of treating OpenAI as a separate external platform. OpenAI says the launch includes OpenAI models on AWS, Codex on AWS, and Amazon Bedrock Managed Agents powered by OpenAI, all initially in limited preview.

Why Enterprises Should Care: The 5 Practical Wins

1. It Brings OpenAI Into the Enterprise’s Existing AWS Environment

Most large companies already have AWS identity, security, networking, logging, compliance, procurement, and billing workflows. This announcement means they can access OpenAI models through Amazon Bedrock while using controls they already understand, including IAM, PrivateLink, guardrails, encryption, and CloudTrail logging.

That matters because AI adoption often gets stuck not because the model is bad, but because legal, security, cloud, procurement, and compliance teams do not want “one more weird external AI thing” touching sensitive data.
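To make the "controls they already understand" point concrete, here is a minimal sketch of a least-privilege IAM policy, built as a plain Python dictionary. It allows invoking a single Bedrock model and only when the request arrives through one PrivateLink (VPC) endpoint. The region, model ID, and endpoint ID are hypothetical placeholders, not values from the announcement, and the preview may expose different model identifiers or require additional actions.

```python
import json

# Hypothetical placeholders: none of these values come from the announcement.
REGION = "us-east-1"
MODEL_ID = "example.openai-model-v1"        # placeholder, not a real Bedrock model ID
VPC_ENDPOINT_ID = "vpce-0123456789abcdef0"  # placeholder PrivateLink endpoint ID


def build_invoke_policy(region: str, model_id: str, vpce_id: str) -> dict:
    """Return an IAM policy document that allows invoking one Bedrock model,
    and only when the request comes through the given VPC endpoint."""
    model_arn = f"arn:aws:bedrock:{region}::foundation-model/{model_id}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "InvokeSingleModelViaPrivateLink",
                "Effect": "Allow",
                "Action": [
                    "bedrock:InvokeModel",
                    "bedrock:InvokeModelWithResponseStream",
                ],
                "Resource": model_arn,
                # aws:SourceVpce is a global IAM condition key that matches
                # the VPC endpoint a request was sent through.
                "Condition": {"StringEquals": {"aws:SourceVpce": vpce_id}},
            }
        ],
    }


policy = build_invoke_policy(REGION, MODEL_ID, VPC_ENDPOINT_ID)
print(json.dumps(policy, indent=2))
```

A security team can review a document like this the same way it reviews any other IAM policy, which is the practical benefit: no new permission model to learn.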

2. It Gives Enterprises a Cleaner Path From Pilot to Production

OpenAI specifically frames this as a path from experimentation to production inside the AWS environments where important workloads already run.

That is huge. A lot of enterprise AI today is trapped in demo or POC land: a chatbot here, a prototype there, a cool proof of concept that never gets deployed. Or worse, the organization worked with a partner or vendor that did not deliver the results it wanted. Bedrock gives enterprises a more familiar production architecture: model access, orchestration, security, governance, logging, and integration with AWS services.
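As a rough illustration of the "model access" piece, the sketch below shapes a request for the Bedrock Runtime Converse API and reads the reply text. It assumes the OpenAI models in the preview are reachable through Converse, which the announcement does not confirm, and the model ID is a hypothetical placeholder. `FakeClient` is an offline stand-in invented for this example so the code runs without AWS access.

```python
from typing import Any, Protocol

MODEL_ID = "example.openai-model-v1"  # placeholder, not a real Bedrock model ID


class ConverseClient(Protocol):
    """Anything with a converse(**kwargs) method, e.g. a bedrock-runtime client."""

    def converse(self, **kwargs: Any) -> dict: ...


def build_request(model_id: str, prompt: str, max_tokens: int = 256) -> dict:
    """Build keyword arguments in the shape the Converse API expects."""
    return {
        "modelId": model_id,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens},
    }


def ask(client: ConverseClient, model_id: str, prompt: str) -> str:
    """Send one user message and return the first text block of the reply."""
    response = client.converse(**build_request(model_id, prompt))
    return response["output"]["message"]["content"][0]["text"]


class FakeClient:
    """Offline stand-in (hypothetical) that echoes the prompt back."""

    def converse(self, **kwargs: Any) -> dict:
        text = kwargs["messages"][0]["content"][0]["text"]
        return {
            "output": {
                "message": {
                    "role": "assistant",
                    "content": [{"text": f"echo: {text}"}],
                }
            }
        }


if __name__ == "__main__":
    print(ask(FakeClient(), MODEL_ID, "Summarize our Q3 incident report."))
```

With real credentials, the same `ask` function would take `boto3.client("bedrock-runtime", region_name="us-east-1")` in place of `FakeClient()`. Keeping the client as a parameter is a design choice that lets a pilot be unit-tested offline before it touches production data.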

3. It Makes OpenAI Easier to Buy

This is one of the biggest practical wins. AWS says OpenAI model and Codex usage can apply toward existing AWS cloud commitments.

For enterprise customers, that means AI spend can potentially fit into an existing AWS commercial agreement instead of requiring a totally separate procurement motion. That shortens sales cycles, reduces vendor onboarding friction, and makes CFO and procurement conversations easier. Combined with the improvements in GPT-5.5 and the upcoming GPT-5.6 models, this could become a serious win for customers.

4. Codex Becomes Much More Enterprise-Friendly

Codex on Bedrock means development teams can use OpenAI’s coding agent inside the AWS environment where they already build and operate. AWS says Codex will work through the Bedrock API, starting with Codex CLI, desktop app, and VS Code extension, with AWS credential-based authentication.

For businesses, that opens up real productivity use cases:

- Modernizing legacy applications

- Generating automated tests

- Refactoring and cleaning up code

- Explaining complex systems to new team members

- Speeding up DevOps and cloud engineering workflows

- Accelerating security remediation

- Helping developers work inside governed enterprise environments

This is not just “AI writes code.” It is “AI helps enterprise software teams move faster without leaving the guardrails.”

5. Managed Agents Make Production Agent Deployments More Realistic

The Bedrock Managed Agents piece may be the most important part strategically. AWS says these agents can have their own identity, log each action, run in the customer’s environment, and use Bedrock for inference.

That is exactly what enterprises need before they let agents do real work. They need to know:

- Who is the agent acting as?

- What permissions does it have?

- What systems can it touch?

- What actions did it take?

- Can security audit it?

- Can governance teams control it?

- Can it scale across business units?

This starts turning agents from “cool AI assistants” into governed operators of real enterprise workflows.
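The questions above map naturally onto a small data model. The sketch below is a plain in-memory illustration, not the Bedrock Managed Agents API: `AgentIdentity`, `AuditLog`, and `attempt` are hypothetical names invented here. It shows the idea of an agent with its own identity, explicit permissions, and a log entry for every attempted action, whether allowed or denied.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str                    # who the agent is acting as
    allowed_actions: frozenset[str]  # what permissions it has
    allowed_systems: frozenset[str]  # what systems it can touch


@dataclass
class AuditLog:
    entries: list[dict] = field(default_factory=list)  # what actions it took


def attempt(agent: AgentIdentity, action: str, system: str, log: AuditLog) -> bool:
    """Check the action against the agent's permissions and record the
    attempt either way, so denied requests are auditable too."""
    allowed = action in agent.allowed_actions and system in agent.allowed_systems
    log.entries.append(
        {
            "time": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent.agent_id,
            "action": action,
            "system": system,
            "allowed": allowed,
        }
    )
    return allowed


log = AuditLog()
router = AgentIdentity(
    agent_id="ticket-router",
    allowed_actions=frozenset({"read", "assign"}),
    allowed_systems=frozenset({"helpdesk"}),
)

print(attempt(router, "assign", "helpdesk", log))  # True: action and system both allowed
print(attempt(router, "delete", "helpdesk", log))  # False: action not permitted
print(attempt(router, "read", "payroll", log))     # False: system not permitted
print(len(log.entries))                            # 3: every attempt is logged
```

In a real deployment, identity and logging would come from the platform (the article names IAM and CloudTrail) rather than application code, but the shape of the record is what security and governance teams will ask to see.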

Why This Is Especially Good for Regulated Industries

For banking, healthcare, energy, SLED (state, local, and education), and insurance, the value is not just the model. The value is the model plus:

- AWS security controls

- Private connectivity via PrivateLink

- Audit logging via CloudTrail

- Identity management via IAM

- Encryption at rest and in transit

- Governance and compliance frameworks

- Existing cloud procurement agreements

- Proximity to enterprise data and applications

That is the difference between “we tried ChatGPT” and “we deployed AI into a compliant enterprise workflow.”

Operationalizing OpenAI on AWS: The NetSync Opportunity

For NetSync, this creates a very strong opportunity to serve enterprise organizations with a clean, compelling story:

“You can now bring OpenAI-grade intelligence into your AWS environment with enterprise-grade security, governance, and operational controls. Our team can help you design, integrate, secure, and operationalize it.”

Immediate service opportunities include:

- AWS Bedrock AI architecture design

- Secure OpenAI-on-AWS pilots

- Codex enablement for enterprise software teams

- Managed agent design and deployment

- Agent identity, permissions, and auditability frameworks

- Data integration with enterprise systems

- Security architecture with Cisco/Splunk observability layered in

- Production AI governance and operating models

This also pairs well with what enterprise customers already ask for: simplified access, centralized management, visibility, consumption tracking, and predictable commercial structures.

The Simple Executive Takeaway

This announcement makes OpenAI enterprise-deployable at scale for new and existing AWS customers.

| | Before | Now |
|---|---|---|
| Model Access | Azure-only or separate OpenAI account | Native via Amazon Bedrock API |
| Security Controls | External platform, separate governance | IAM, PrivateLink, CloudTrail, Bedrock guardrails |
| Procurement | Separate OpenAI contract required | Counts toward existing AWS cloud commitment |
| Agent Deployment | Custom orchestration, no native identity | Managed Agents with identity, logging, governance |
| Developer Tooling | External Codex API, separate auth | Codex CLI/VS Code with AWS credential-based auth |

Operator Action Plan: 3 Steps to Take This Week

- Request limited preview access today. Go to the OpenAI on AWS announcement page and sign up for the Bedrock preview. Seats are limited and enterprise demand will be high.

- Audit your Azure OpenAI spend and map it to Bedrock. If you are currently running GPT-4 or GPT-5.5 workloads through Azure OpenAI Service, identify which ones can migrate to Bedrock. The security controls are equivalent or better, and the procurement is simpler.

- Pilot one Managed Agent workflow before GA. Pick your highest-volume repetitive knowledge work — report generation, code review, customer routing — and design a Managed Agent architecture around it. Build the governance layer now, before you scale.

Frequently Asked Questions

Is OpenAI on AWS available now?

As of April 28, 2026, all three products — OpenAI models on Bedrock, Codex on AWS, and Bedrock Managed Agents powered by OpenAI — are in limited preview. General availability timelines have not been announced. Enterprise customers should request preview access now to get ahead of the GA queue.

Do I still need an Azure account to use OpenAI?

No. Following the amended Microsoft/OpenAI partnership, OpenAI is now cloud-agnostic. AWS-native enterprises can access OpenAI models directly through Amazon Bedrock without maintaining a separate Azure subscription.

Does Codex usage count toward my AWS Enterprise Discount Program (EDP)?

AWS has confirmed that OpenAI model and Codex usage can apply toward existing AWS cloud commitments. The specific mechanics of EDP credit application will depend on your individual enterprise agreement. Engage your AWS account team to confirm the details for your organization.

What is the difference between Bedrock Managed Agents and standard OpenAI Agents?

Standard OpenAI Agents run on OpenAI’s infrastructure with OpenAI’s identity and logging systems. Bedrock Managed Agents run inside your AWS environment, use AWS IAM for identity, log every action to CloudTrail, and integrate natively with your existing AWS security and governance stack. For regulated industries, this distinction is critical.

Is this relevant if my organization is on Google Cloud instead of AWS?

Yes — Google Cloud (Vertex AI) is also expected to receive OpenAI model access as part of the broader cloud-agnostic strategy. AWS is first to market, but GCP integration is anticipated. Organizations on GCP should monitor the Vertex AI announcements closely over the next 60 to 90 days.

References

- OpenAI. (2026, April 28). OpenAI models, Codex, and Managed Agents come to AWS.

- Amazon. (2026, April 28). AWS and OpenAI announce expanded partnership.

- TechCrunch. (2026, April 28). Amazon is already offering new OpenAI products on AWS.

- Fleagle, J. (2026, April 29). OpenAI Moves Into AWS: GPT-5.5, Codex, & AI Agents Are Native on AWS. LinkedIn.

About Jason Fleagle

Jason Fleagle is the Head of AI for NetSync and an AI and Growth Consultant who helps global brands adopt and manage AI successfully. He helps humanize data so every growth decision an organization makes is rooted in clarity and confidence. Jason has helped lead the development and delivery of over 500 AI projects and tools, and frequently conducts training workshops to help companies understand and adopt AI. Connect with Jason on LinkedIn.