Anthropic Releases Opus 4.7: The “Safe” Prelude to Mythos

Anthropic just released a model they openly admit is less capable than their best one — and that’s actually the point.

Claude Opus 4.7 is now generally available. It’s a massive leap forward for agentic workflows, coding, and long-horizon tasks. But the real story isn’t just what Opus 4.7 can do. It’s what Anthropic deliberately trained it not to do.

Opus 4.7 is the safety test bed that has to work before Anthropic’s “superhacker” model, Mythos Preview, ever goes public.

Here is what operators need to know about the new model, the Mythos shadow, and the dual-use dilemma of AI cyber warfare.

What Is Claude Opus 4.7? Capabilities and Benchmarks

Despite being throttled in specific areas, Opus 4.7 is the most powerful generally available Claude model on the market today. It’s priced exactly the same as Opus 4.6 — $5 per million input tokens and $25 per million output tokens — but delivers significant upgrades for developers and operators building agentic AI systems.
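To make the pricing concrete, here is a minimal Python sketch of the arithmetic using the two list prices quoted above. The function name and the token counts are hypothetical, chosen only for illustration, and the sketch assumes flat per-token pricing with no caching or batch discounts.

```python
# List prices quoted in this article, in USD per million tokens.
INPUT_PRICE_PER_MTOK = 5.00
OUTPUT_PRICE_PER_MTOK = 25.00


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of a workload at the quoted list prices.

    Hypothetical helper: assumes flat pricing, with no caching or
    batch discounts applied.
    """
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    total = (input_tokens * INPUT_PRICE_PER_MTOK
             + output_tokens * OUTPUT_PRICE_PER_MTOK)
    return total / 1_000_000


# Hypothetical agentic run: 2M input tokens, 400k output tokens.
cost = estimate_cost(2_000_000, 400_000)
print(f"${cost:.2f}")  # $20.00
```

Output tokens cost five times as much as input tokens, so long-running agentic tasks that generate a lot of text or reasoning will usually be dominated by the output side of the bill.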

Key Performance Improvements Over Opus 4.6

- Agentic Coding: Opus 4.7 achieved a 13% lift on a 93-task coding benchmark compared to Opus 4.6, including solving four tasks that neither Opus 4.6 nor Sonnet 4.6 could crack.

- Long-Horizon Tasks: It can think for hours without looping. In one test by Bolt.new, it solved an “unbeatable” coding challenge by thinking for 7.5 hours straight — with individual thinking blocks exceeding an hour. Prior models that think that long tend to loop. This one thought its way out.

- Context Survival: When a model runs long enough, it has to compress its context, which usually kills the run. Opus 4.7 compressed its context 3–4 times on a single task and kept executing against the original plan each time.

- Self-Verification: It catches its own logical faults during the planning phase and pressure-tests its own output before handing it to you. In Bolt.new’s evaluations, it independently identified flaws in test logic that their own team hadn’t caught.

- App Building: Bolt.new’s internal evals showed Opus 4.7 outperformed Opus 4.6 across their app-building benchmarks, with gains reaching ~10% in the best cases.

- Knowledge Work: Clear gains on tasks requiring synthesis of large volumes of unstructured information — legal filings, financial documents, compliance reviews. In one test, it processed hundreds of source documents, identified key issues, built a strategy, and produced a step-by-step instruction set with written justifications for every decision.

For operators building apps or automating complex workflows, Opus 4.7 is a clear upgrade. But its release strategy is what makes it truly significant.

The Mythos Shadow: Why Anthropic Is Deliberately Throttling Cyber Capabilities

To understand Opus 4.7, you have to understand Claude Mythos Preview and Project Glasswing, the Anthropic initiative built around it.

Mythos is Anthropic’s unreleased model that autonomously found thousands of zero-day vulnerabilities across major operating systems and browsers. It was so dangerous that Anthropic restricted access to a tiny group of elite tech giants — Microsoft, Google, and Apple — and sparked emergency meetings with the Trump administration and bank CEOs about the security risks of deploying it at scale.

Anthropic realized they couldn’t just release Mythos to the public. The risk of bad actors using it to automate exploit creation at scale was too high.

So, they built Opus 4.7.

During training, Anthropic experimented with efforts to “differentially reduce” Opus 4.7’s cyber capabilities. They deliberately made it worse at hacking than Mythos. They are now using Opus 4.7 to test new safeguards in the wild — safeguards that automatically detect and block requests indicating prohibited or high-risk cybersecurity uses. What they learn from real-world deployment will inform the eventual broad release of Mythos-class models.

The Dual-Use Dilemma: Same Model, Different Hands

This creates a fascinating and challenging dynamic for enterprise security teams.

The same model that can autonomously patch a vulnerability can be inverted to exploit it. Anthropic is trying to thread the needle by releasing a highly capable model for general work (Opus 4.7) while keeping the true cyber weapon (Mythos) locked down.

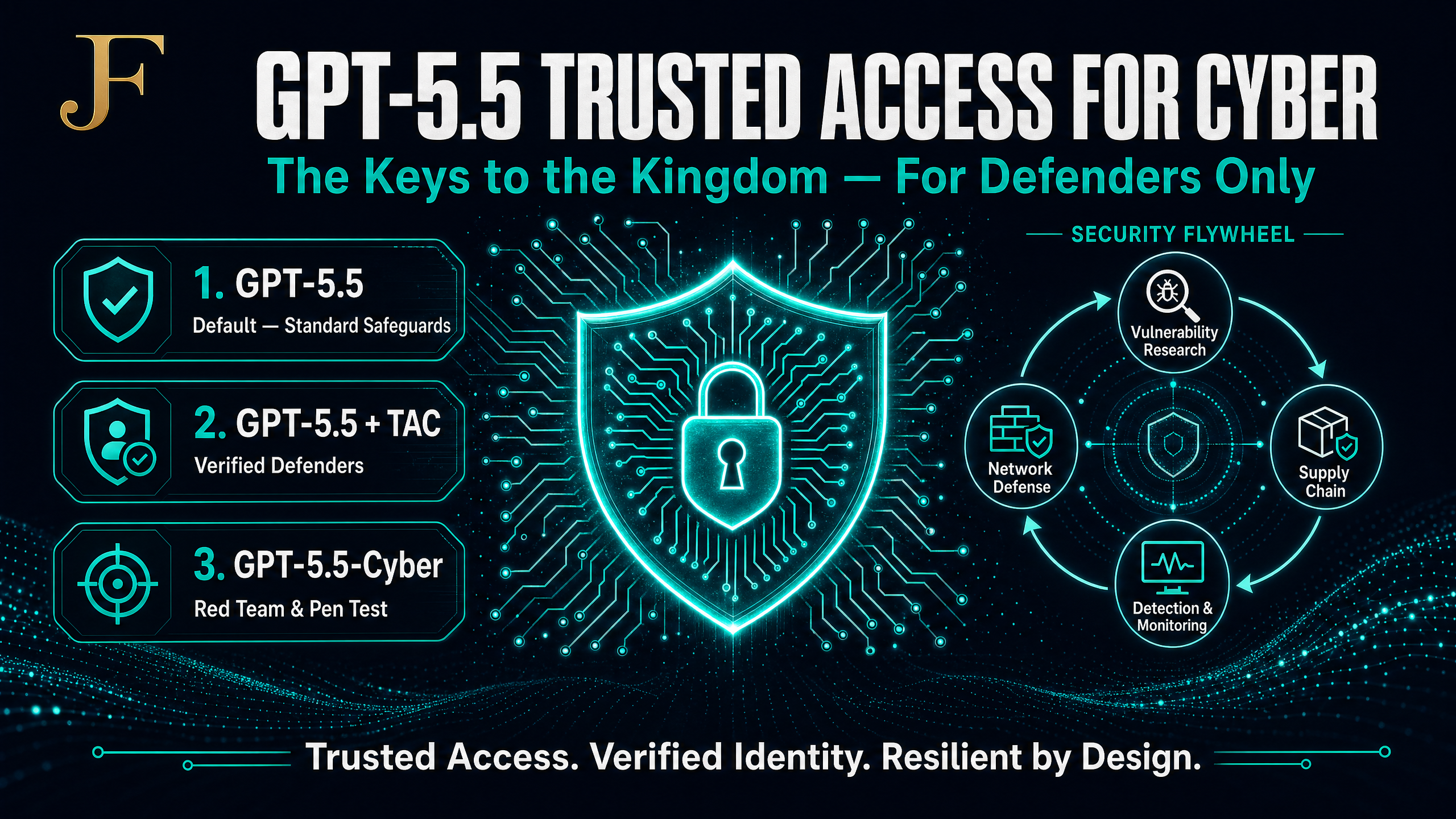

But they know legitimate security professionals need these tools for vulnerability research, penetration testing, and red-teaming. That’s why they launched the Cyber Verification Program. Verified security professionals can apply to use Opus 4.7 for these specific, high-risk use cases under a formal verification framework.

Anthropic’s Responsible Scaling Playbook vs. OpenAI’s Approach

Anthropic is executing their “responsible scaling” strategy in real time, and it’s worth understanding the full sequence:

- Build a highly capable, potentially dangerous model (Mythos).

- Restrict access to a trusted few while assessing risks.

- Train a slightly less capable version with built-in safeguards (Opus 4.7).

- Release the safer version publicly to test the safeguards in the wild.

- Learn, iterate, and eventually release the more powerful model when it’s deemed safe.

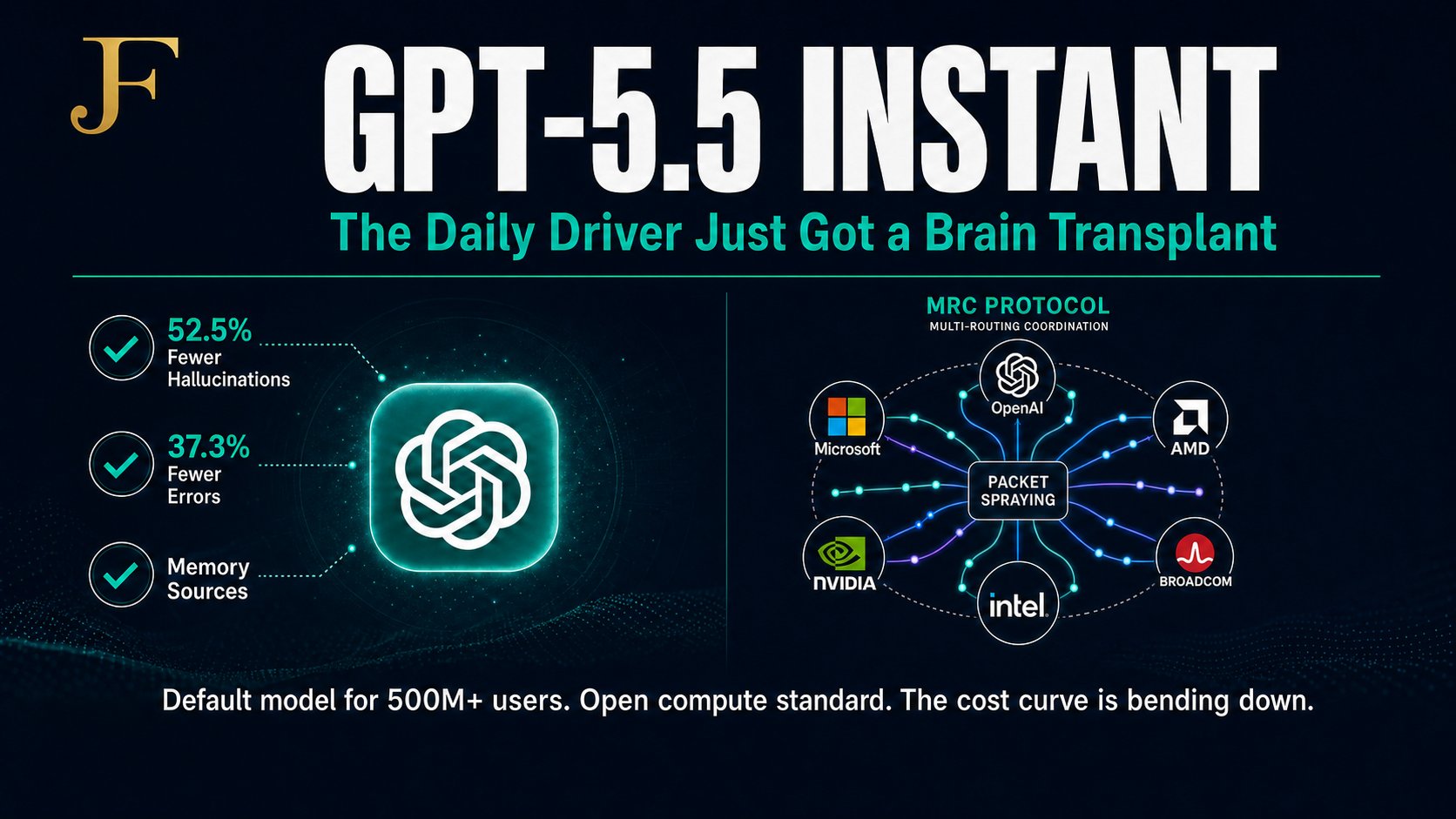

Is this the right approach, or is it safety theater? Only time will tell. But it’s a stark contrast to OpenAI’s recent release of GPT-5.4-Cyber, which they gave to thousands of verified defenders right out of the gate through their Trusted Access for Cyber (TAC) program. Two philosophies, same arms race.

Your Operator Action Plan

Here is what you need to do right now:

- Upgrade Your Agentic Workflows: If you are using Claude for agentic coding, complex data synthesis, or long-horizon tasks, switch to Opus 4.7 immediately. The cost is identical to Opus 4.6, but the reasoning depth, context survival, and self-verification capabilities are significantly better.

- Apply for Cyber Verification: If you run a security team, apply for Anthropic’s Cyber Verification Program now. You need to start testing these capabilities before Mythos-class models become the baseline threat — and the baseline tool for your adversaries.

- Model the Threat Surface: Assume that bad actors will eventually get their hands on Mythos-level capabilities, either through a leak, an open-source equivalent, or a less scrupulous competitor. Start shifting your security strategy from static audits to continuous, AI-driven red-teaming.

How Does Opus 4.7 Compare to Other Leading Models?

In the broader AI model landscape, Opus 4.7 competes directly with OpenAI’s GPT-4.1 and Google’s Gemini 2.5 Pro in the enterprise coding and agentic workflow category. Its differentiator is the combination of long-horizon context survival and self-verification, capabilities that matter most when you are running complex, multi-step automations rather than single-turn queries.

For more context on the AI cyber arms race between Anthropic and OpenAI, read our breakdown of GPT-5.4-Cyber and the OpenAI vs. Anthropic Mythos rivalry. For a broader view of how agentic AI is reshaping enterprise workflows, see our analysis of Meta’s Muse Spark multi-agent AI launch.

Frequently Asked Questions

What is Claude Opus 4.7?

Claude Opus 4.7 is Anthropic’s most powerful generally available AI model, released April 16, 2026. It delivers major improvements in agentic coding, long-horizon task execution, context survival, and self-verification compared to Opus 4.6, at the same price point of $5/M input and $25/M output tokens.

How does Opus 4.7 differ from Claude Mythos Preview?

Claude Mythos Preview is Anthropic’s most powerful model but remains restricted to a small group of elite tech companies due to its advanced cyber capabilities. Opus 4.7 was deliberately trained with reduced cyber capabilities and built-in safeguards, making it safe for general availability while Anthropic tests its safety systems in the real world.

What is Project Glasswing?

Project Glasswing is Anthropic’s initiative to responsibly deploy advanced AI cybersecurity capabilities. It involves restricting access to Mythos Preview, testing safeguards on less capable models like Opus 4.7, and establishing a Cyber Verification Program for legitimate security professionals.

Should I upgrade from Claude Opus 4.6 to Opus 4.7?

Yes, for most enterprise use cases. The pricing is identical, and Opus 4.7 delivers measurable improvements in agentic coding (13% benchmark lift), long-horizon task execution, and context survival. The upgrade is particularly valuable for teams running complex, multi-step AI workflows.

References

- Anthropic: Introducing Claude Opus 4.7

- CNBC: Anthropic releases Claude Opus 4.7, a less risky model than Mythos

- Bolt.new: 7 things Opus 4.7 does better than 4.6

- Anthropic: Project Glasswing

- Anthropic: Cyber Verification Program

About Jason Fleagle

Jason Fleagle is an AI and Growth Consultant and Head of AI for Netsync. He helps businesses leverage artificial intelligence, automation, and digital marketing to drive measurable ROI and scale operations. Follow the AI Pathfinder newsletter for weekly breakdowns of what’s actually moving in AI — and what it means for you.
