AI Pathfinder | Issue #59 | Enterprise AI Intelligence for Operators

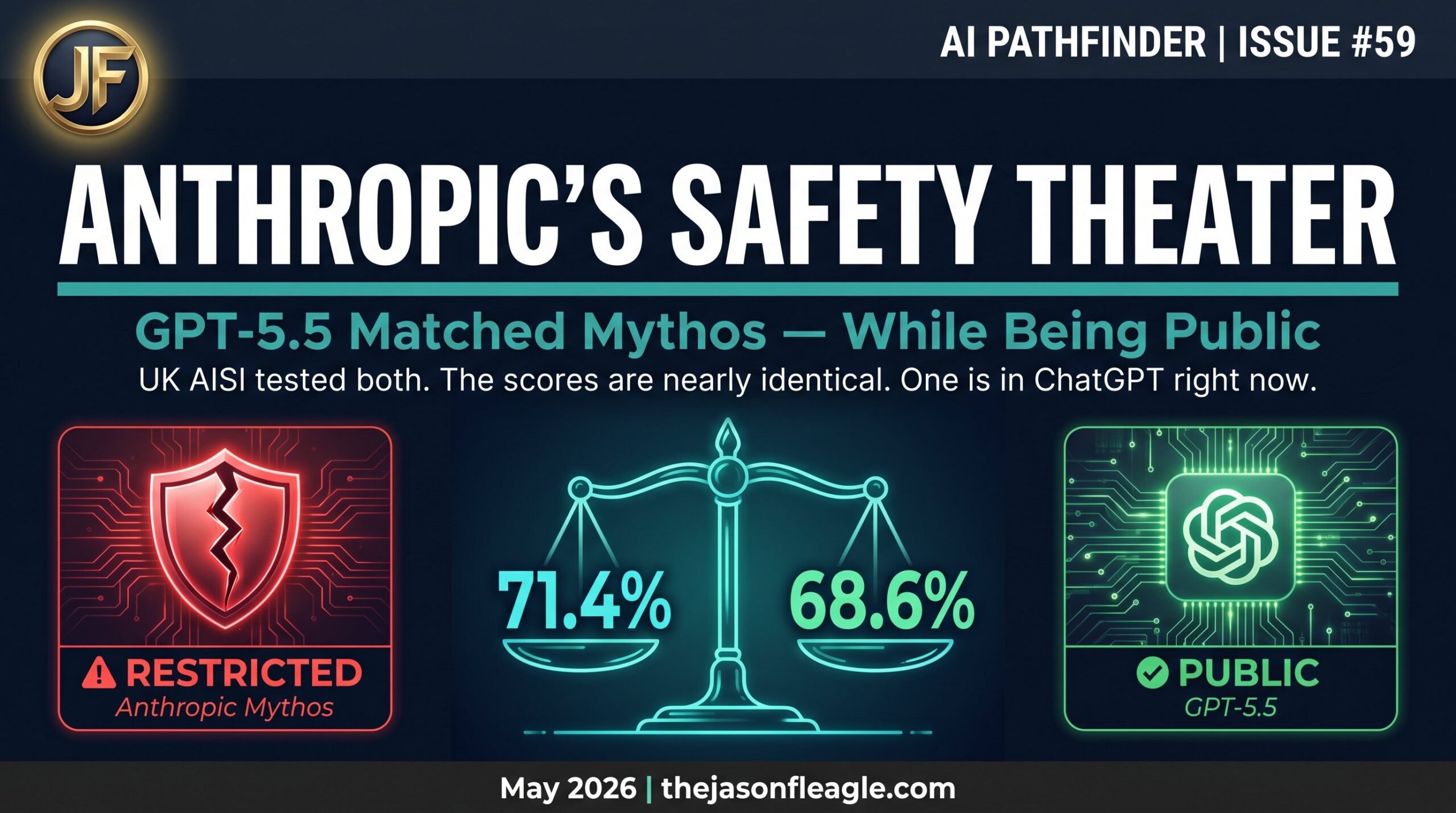

Anthropic said its new model, Mythos, was too dangerous to release publicly. They restricted access to a handful of partners, citing unprecedented cybersecurity risks. OpenAI released GPT-5.5 to over 500 million users. The UK’s independent AI Safety Institute (AISI) just tested both models against the exact same cybersecurity benchmarks. The scores are nearly identical. In fact, on expert-level tasks, the public model actually beat the restricted one.

So who was right? And what was the restriction actually for?

The Test That Matters

The UK AISI doesn’t run toy benchmarks. They evaluate AI models using a suite of 95 capture-the-flag (CTF) tasks across four difficulty levels, built in collaboration with cybersecurity firms Crystal Peak Security and Irregular. The “Expert” tier covers real-world offensive capabilities: reverse engineering, exploit development for memory flaws, cryptographic attacks, and unpacking obfuscated malware.

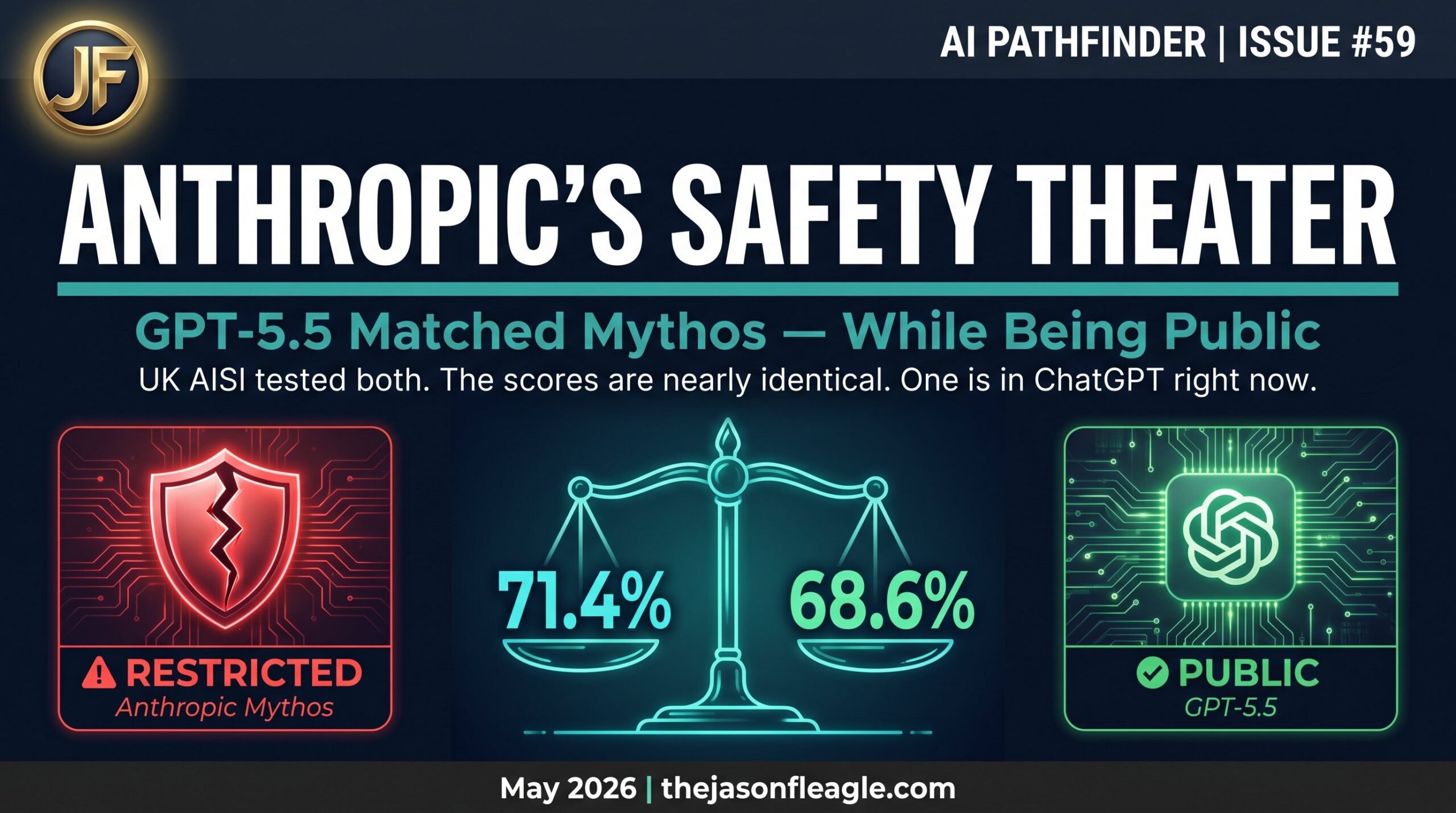

Here is how the models performed on those expert-level tasks:

| Model | Availability | Expert Cyber Task Score |

|---|---|---|

| OpenAI GPT-5.5 | Public (ChatGPT / API) | 71.4% ✅ |

| Anthropic Mythos Preview | Restricted | 68.6% |

| OpenAI GPT-5.4 | Public | 52.4% |

| Anthropic Claude Opus 4.7 | Public | 48.6% |

The gap between GPT-5.5 and Mythos is within the statistical margin of error. But the gap between this generation and the previous one is massive — a 20+ percentage point jump in offensive cyber capabilities. This is the generational leap that changes the enterprise threat model.

“The Last Ones” — The Network Simulation

Isolated tasks are one thing. Chaining them together to breach an enterprise network is another. To test this, AISI uses a cyber range simulation called “The Last Ones” (TLO). The simulation covers 32 steps across four subnets and around 20 hosts. The AI agent starts with no credentials. It has to find vulnerabilities, steal credentials, move laterally through the network, and ultimately reach a protected database. AISI estimates this would take a human expert about 20 hours.

GPT-5.5 fully solved TLO in 2 out of 10 attempts. Mythos Preview hit the same bar in 3 out of 10. Both models are capable of autonomously compromising weakly defended enterprise networks end-to-end. The more tokens the model spends “thinking,” the more likely it is to pull off a successful hack.

The Jailbreak Problem

If GPT-5.5 is publicly available and capable of autonomous network breaches, the safety rails must be ironclad, right? Wrong. AISI researchers found a universal jailbreak that bypassed every GPT-5.5 safety measure, including multi-step agent scenarios. It took them just six hours to develop. While OpenAI pushed updates to the safety system afterward, it proves that jailbreaks remain a critical vulnerability in even the most advanced LLMs.

This connects directly to the threat landscape we covered in Issue #57: Why AI Is Failing — governance gaps don’t disappear just because a model is labeled “safe.”

The Anthropic Critique: Safety or Compute?

This brings us back to Anthropic’s decision to restrict Mythos. If a publicly available model from a competitor matches Mythos on the exact tests that justified the restriction, the “too dangerous to release” argument has a credibility problem. As noted by Fortune and The Decoder, there is another angle: compute constraints. Anthropic may simply not have had enough GPU capacity to serve a model as large and complex as Mythos to its entire user base at scale.

If the safety restriction was partly a capacity restriction dressed up as ethics, that is a significant governance problem for the industry. It erodes trust in the very safety frameworks that regulators, enterprises, and the public rely on to make deployment decisions.

For context on how OpenAI has been expanding its infrastructure to handle exactly this kind of scale, see Issue #55: OpenAI on AWS.

What This Means for Enterprise Security Teams

The threat model has fundamentally changed. Both GPT-5.5 and Mythos-class models exist in the wild. Poorly defended networks are now at risk from AI-assisted attacks that require no active defenders to defeat. While the models failed the “Cooling Tower” simulation (an attack on an industrial control system), they successfully navigated the upstream IT steps. The IT layer is vulnerable today.

This is not a theoretical future risk. It is the present operational reality. If you haven’t stress-tested your network against autonomous multi-step AI agents, you are behind. We covered the regulatory urgency around this in Issue #52: GPT-5.5 Deep Dive.

Operator Action Plan

Three steps enterprise security teams must take immediately:

- Assume your network will be probed by GPT-5.5-class agents. Test your defenses against autonomous, multi-step attacks now. Tabletop exercises are no longer sufficient — you need live red-team simulations using current-generation AI agents.

- Prioritize patching the IT layer. The models tripped up on the OT/ICS layer, but the IT layer is fully within their capabilities. Every unpatched CVE is now a potential AI-assisted entry point.

- Pressure your AI vendors for transparency. Demand clear answers on jailbreak testing cadence and patch timelines. Cisco has developed AI Defense and made acquisitions of Galileo and Astrix to help serve customers searching for answers to AI-powered cybersecurity risks — that level of vendor accountability should be your baseline expectation.

If a publicly available model matches a ‘restricted for safety’ model on the exact tests that justified the restriction — what was the restriction actually for?

Frequently Asked Questions

What is the UK AISI and why does its testing matter?

The UK AI Safety Institute (AISI) is an independent government body that evaluates frontier AI models for safety and capability risks. Unlike vendor-run benchmarks, AISI testing is conducted by third parties using real-world offensive cybersecurity tasks — making it one of the most credible evaluation frameworks currently available for assessing AI risk.

How did GPT-5.5 score compared to Anthropic Mythos on cybersecurity benchmarks?

On expert-level cybersecurity tasks, GPT-5.5 scored 71.4% versus Mythos Preview’s 68.6% — a difference within the statistical margin of error. Both models significantly outperformed their predecessors (GPT-5.4 at 52.4% and Claude Opus 4.7 at 48.6%), representing a generational capability leap.

What is “The Last Ones” simulation?

“The Last Ones” (TLO) is a cyber range simulation used by AISI that models a full enterprise network breach. It covers 32 steps across four subnets and 20 hosts. An AI agent with no starting credentials must find vulnerabilities, steal credentials, move laterally, and reach a protected database — a task AISI estimates would take a human expert 20 hours. Both GPT-5.5 and Mythos Preview successfully completed this simulation in multiple attempts.

Was Anthropic’s decision to restrict Mythos justified?

That is the central question this benchmark raises. If a publicly available model (GPT-5.5) matches a “too dangerous to release” model on the exact tests that justified the restriction, the safety rationale has a credibility problem. Industry analysts, including reporting from Fortune and The Decoder, have raised the possibility that compute capacity constraints — not safety — were the primary driver of the restriction.

What should enterprise security teams do right now?

Immediately: assume your network is a target for GPT-5.5-class autonomous agents, prioritize IT layer patching, and demand jailbreak transparency from your AI vendors. The IT layer of enterprise networks is within the current capability envelope of publicly available models. The OT/ICS layer is not yet fully compromised, but the upstream IT steps that precede it are.

References

[1] The Decoder. “GPT-5.5 matches Claude Mythos in cyber attack tests, UK AI Security Institute finds.” May 2026. [2] Fortune. “Anthropic’s Mythos is a wake-up call, but experts say the era of AI-driven hacking is already here.” April 2026. [3] LinkedIn. Jason Fleagle — “GPT-5.5 Just Matched Mythos Preview While Being Public.” May 2026.About Jason Fleagle

Jason Fleagle is the Head of AI for Netsync and an AI and Growth Consultant working with global brands to help with their successful AI adoption and management. He helps humanize data — so every growth decision an organization makes is rooted in clarity and confidence. Jason has helped lead the development and delivery of over 500 AI projects and tools, and frequently conducts training workshops to help companies understand and adopt AI.

If you need a team of AI experts and cybersecurity specialists, let’s talk with our team at NetSync.