AI Pathfinder | Issue #60 | Enterprise AI Intelligence for Operators

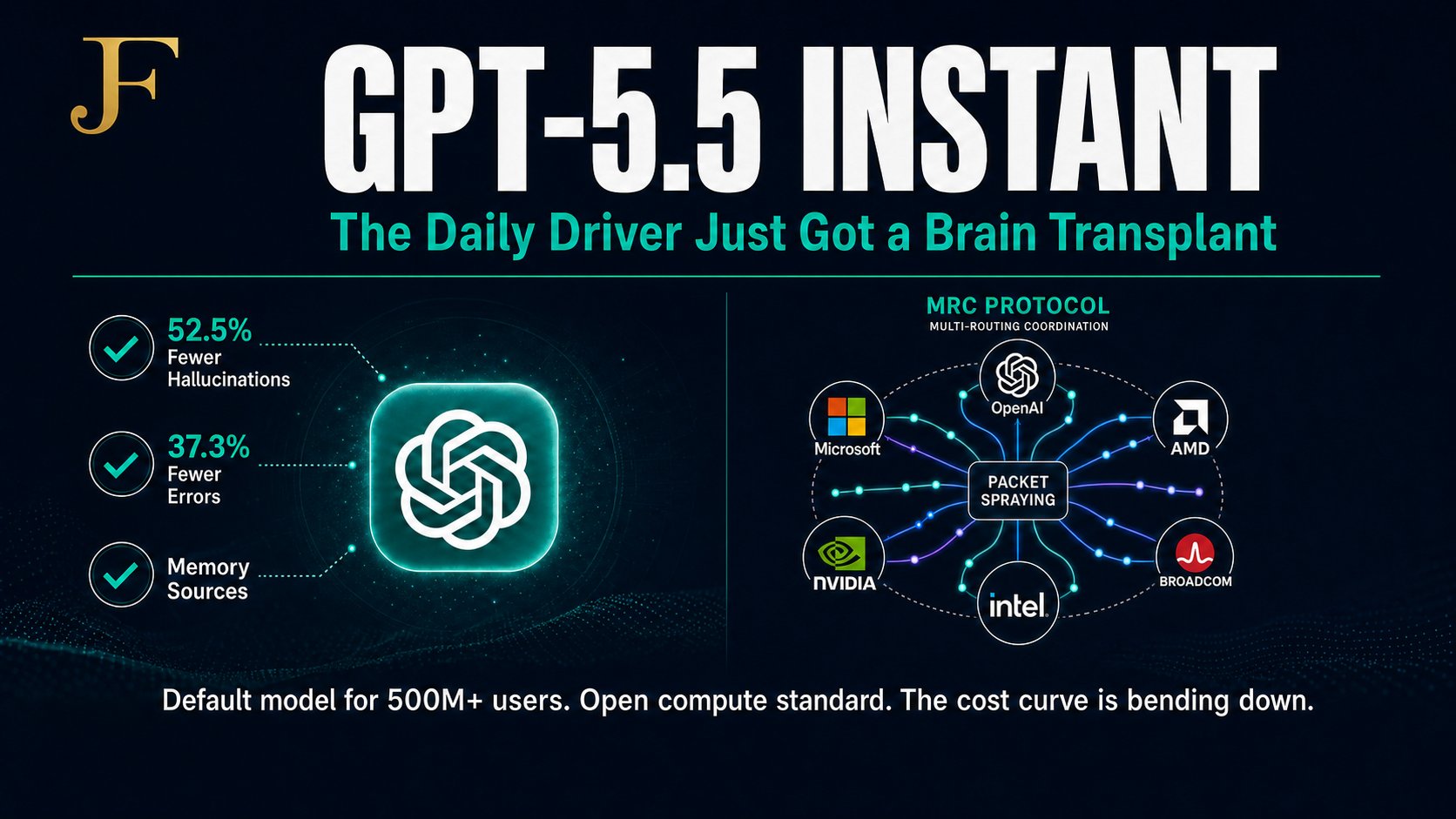

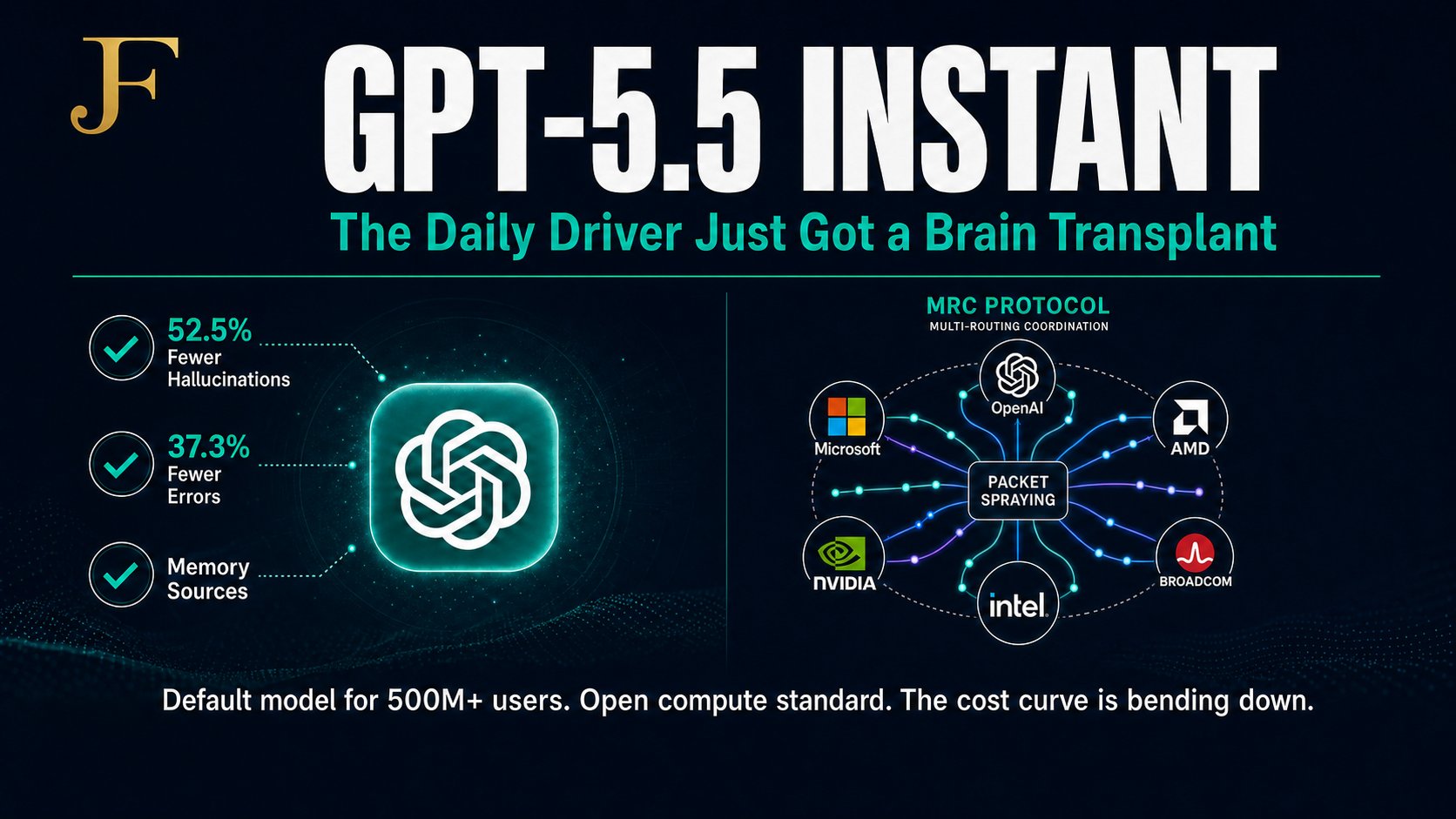

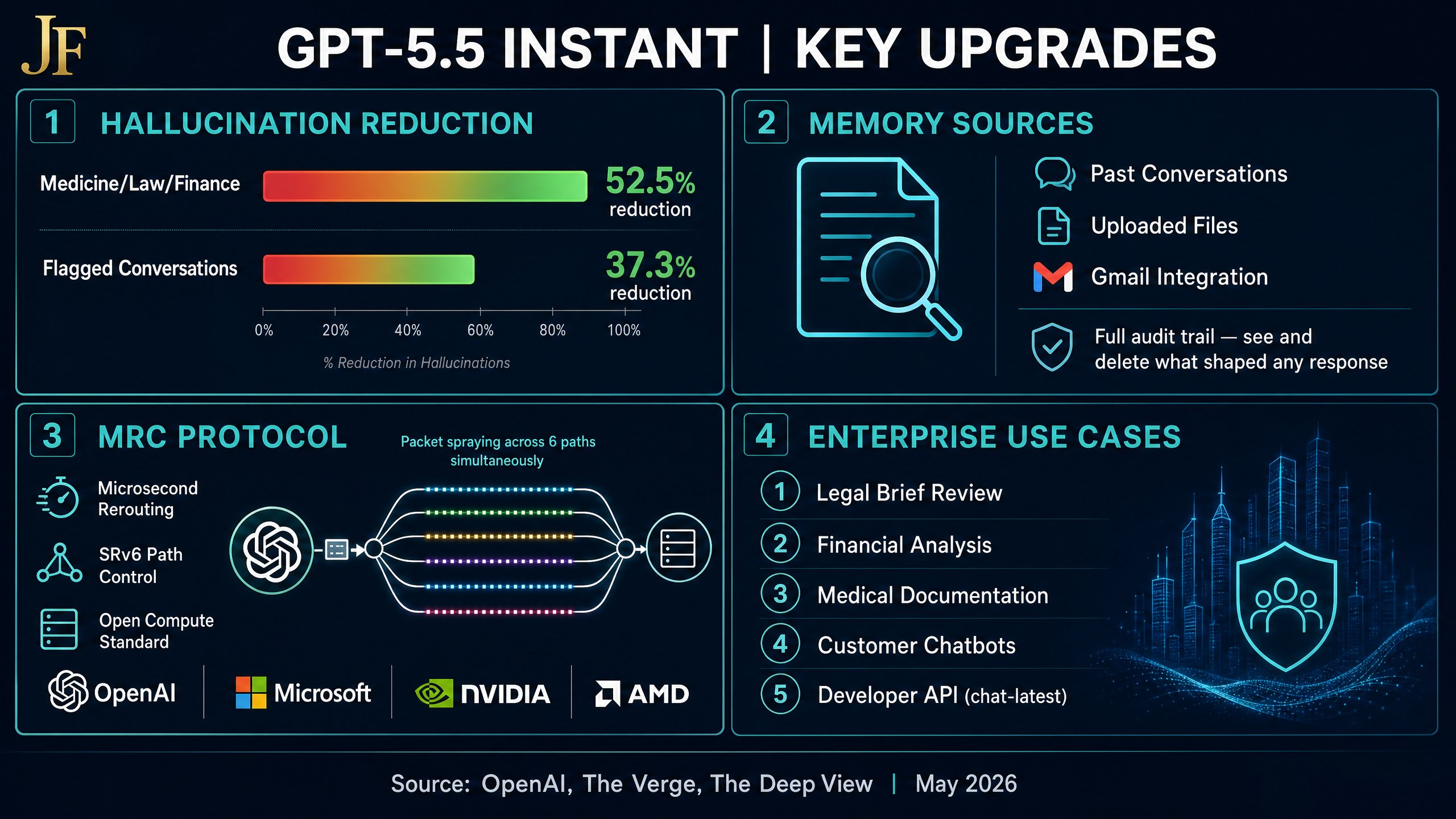

The model that more than 500 million people use every day just got a major upgrade. Not a new tier. Not a paid feature. The default. That scale is what makes a 52.5% hallucination reduction meaningful. OpenAI replaced ChatGPT’s default model for 500M+ users and, in the same week, quietly published the networking protocol that makes every future model faster and cheaper to train.

Feature 1: GPT-5.5 Instant — What Changed

This is not a model swap buried in a changelog. It is a default replacement for the largest AI user base on the planet. The four core improvements are:

- Factuality — 52.5% fewer hallucinations on medicine, law, and finance; 37.3% fewer errors on flagged conversations.

- Smarter defaults — better STEM reasoning, better image analysis, better web search decisions.

- Tighter responses — less verbosity, fewer unnecessary follow-ups, no gratuitous emojis.

- Personalization — pulls from past chats, files, and Gmail; memory sources show exactly what context was used.

The Memory Sources Feature: The Enterprise Compliance Unlock

This is the feature that matters most for regulated industries. You can now see exactly what context shaped a response — and delete or correct it. For legal, finance, and healthcare teams, this is the audit trail they have been asking for. Accuracy and context retrieval are non-negotiable in these industries, and memory sources finally make that visible.

This capability connects directly to the enterprise deployment challenges we analyzed in Issue #56: The Micro-Productivity Trap — the missing piece was always auditability, not capability.

The Benchmark: GPT-5.5 Instant vs. GPT-5.3 Instant

| Metric | GPT-5.5 Instant | GPT-5.3 Instant (Prior Default) |
|---|---|---|
| Hallucination Rate (High-Stakes: Medicine / Law / Finance) | -52.5% ✅ | Baseline |
| Flagged Conversation Error Rate | -37.3% ✅ | Baseline |
| Memory Sources (Context Audit Trail) | Yes | No |
| Gmail / File / Chat Integration | Yes | Limited |
| Response Verbosity | Reduced | Higher |
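The percentages in the table are relative reductions against the prior default, not absolute error rates. A minimal sketch of that arithmetic follows. The 10% baseline is a purely hypothetical number chosen for illustration, since no absolute rates are given here, and `apply_relative_reduction` is an illustrative helper, not part of any OpenAI tooling.

```python
def apply_relative_reduction(baseline_rate: float, reduction_pct: float) -> float:
    """Return the rate that remains after a relative reduction given in percent."""
    if not 0.0 <= baseline_rate <= 1.0:
        raise ValueError("baseline_rate must be between 0 and 1")
    if not 0.0 <= reduction_pct <= 100.0:
        raise ValueError("reduction_pct must be between 0 and 100")
    return baseline_rate * (1.0 - reduction_pct / 100.0)


# ASSUMPTION: a hypothetical baseline of 10 hallucinated answers per 100.
hypothetical_baseline = 0.10
new_rate = apply_relative_reduction(hypothetical_baseline, 52.5)

print(f"baseline: {hypothetical_baseline:.2%}")  # baseline: 10.00%
print(f"after:    {new_rate:.2%}")               # after:    4.75%
```

Under that assumed baseline, a 52.5% relative reduction is a drop of 5.25 percentage points. With a lower real baseline the absolute gain shrinks accordingly, which is why the number is worth validating on your own prompts.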

Feature 2: The MRC Protocol — The Infrastructure Play

Buried in the same week’s announcements: OpenAI, Microsoft, AMD, Broadcom, Nvidia, and Intel jointly published MRC (Multipath Reliable Connection) as an open compute standard under the Open Compute Project. This is the networking protocol used to train GPT-5.5 itself.

What MRC actually does: packet spraying across hundreds of simultaneous paths, microsecond failure rerouting, and SRv6 path control. It is already running in production at Oracle’s Abilene, Texas site and at Microsoft Fairwater. The technical result is that AI training clusters can now run on commodity networking hardware with supercomputer-grade reliability.
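To make "packet spraying" and "failure rerouting" concrete, here is a toy simulation. It is not the MRC specification or wire format: the `Path` class, the `spray` and `reassemble` functions, and the random path choice are all assumptions made for illustration. It also does not model packets lost in flight or SRv6 path control.

```python
import random
from dataclasses import dataclass, field
from typing import Iterable, List


@dataclass
class Path:
    """One hypothetical network path between sender and receiver."""
    path_id: int
    healthy: bool = True
    delivered: List[int] = field(default_factory=list)


def spray(packets: Iterable[int], paths: List[Path], rng: random.Random) -> None:
    """Send each packet over a randomly chosen healthy path."""
    for seq in packets:
        healthy = [p for p in paths if p.healthy]
        if not healthy:
            raise RuntimeError("no healthy paths left")
        rng.choice(healthy).delivered.append(seq)


def reassemble(paths: List[Path]) -> List[int]:
    """Receiver side: gather packets from every path and restore sequence order."""
    return sorted(seq for p in paths for seq in p.delivered)


rng = random.Random(0)
paths = [Path(i) for i in range(8)]

spray(range(0, 50), paths, rng)      # first half of the transfer
paths[3].healthy = False             # simulated link failure
count_on_failed_path = len(paths[3].delivered)
spray(range(50, 100), paths, rng)    # remaining traffic avoids the failed path

assert len(paths[3].delivered) == count_on_failed_path
assert reassemble(paths) == list(range(100))
```

The point of the sketch is the design choice: because no single flow depends on a single path, losing one link only shrinks the pool of paths instead of stalling the transfer.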

Why MRC Is a Bigger Deal Than It Sounds

Most open standards announcements are competitive positioning. This one is different. The six companies publishing MRC together represent the entire AI compute supply chain — chip design, networking, cloud infrastructure, and model training. Publishing it as an open standard under the Open Compute Project means every hyperscaler and enterprise data center can implement it without licensing fees.

The implication: the cost curve for AI infrastructure is bending down. Not eventually — now. Every future model trained on MRC-enabled infrastructure will be faster and cheaper to produce. That acceleration compounds. For the context on how OpenAI has been building out this infrastructure layer, see Issue #55: OpenAI on AWS.

What This Means for Enterprise Operators

The daily driver is now reliable enough for regulated use cases. Memory sources give compliance teams the audit trail they need. MRC means the models will keep improving faster and cheaper — and the speed of innovation and adoption will only increase. If your organization has been waiting for “good enough” accuracy before deploying ChatGPT in high-stakes workflows, that threshold has moved.

This is the same dynamic we covered in Issue #52: GPT-5.5 Deep Dive — the capability jumps are now arriving faster than most enterprise procurement cycles can track.

5 Immediate Enterprise Use Cases

- Legal brief review with memory sources providing a full audit trail of context used.

- Financial analysis with hallucination-reduced outputs on high-stakes calculations.

- Medical documentation with 52.5% fewer factual errors on clinical content.

- Customer-facing chatbots with personalization drawn from past interactions and files.

- Developer tooling via the `chat-latest` API endpoint to ensure your integrations always use the current default model.

Operator Action Plan

Three steps to take this week:

- Enable Gmail + memory sources for your team’s ChatGPT accounts. Ensure it is done with the right AI guardrails in place — memory sources are powerful, but they also mean the model is retaining context across sessions. Audit what it is storing.

- Test GPT-5.5 Instant on your highest-stakes prompts (legal, finance, medical) and compare results against your current model. The 52.5% hallucination reduction is a headline number — validate it against your specific use cases.

- Update your API integrations to `chat-latest` to ensure you are always running the current default model without manual version management.
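The second step, comparing the new default against your current model on your own prompts, can be sketched as a small harness. Everything named here is hypothetical: `current_model` and `candidate_model` are stand-in stubs where your real API calls would go, the test cases are placeholders, and the substring check is a deliberately crude grader that a real evaluation would replace with expert review.

```python
from typing import Callable, Iterable, List, Tuple

Case = Tuple[str, str]  # (prompt, text the answer must contain)


def error_rate(model: Callable[[str], str], cases: Iterable[Case]) -> float:
    """Fraction of cases where the model's answer lacks the expected text."""
    case_list: List[Case] = list(cases)
    if not case_list:
        raise ValueError("need at least one test case")
    errors = sum(
        1 for prompt, expected in case_list
        if expected.lower() not in model(prompt).lower()
    )
    return errors / len(case_list)


def relative_reduction(old_rate: float, new_rate: float) -> float:
    """Percent reduction in error rate relative to the old rate."""
    if old_rate == 0:
        raise ValueError("old_rate is zero; relative reduction is undefined")
    return (old_rate - new_rate) / old_rate * 100.0


# Placeholder cases; substitute your own high-stakes prompts and checks.
CASES: List[Case] = [
    ("prompt A", "answer a"),
    ("prompt B", "answer b"),
    ("prompt C", "answer c"),
    ("prompt D", "answer d"),
]


def current_model(prompt: str) -> str:
    """Stub for the model you run today (wrong on C and D)."""
    return {"prompt A": "Answer A.", "prompt B": "Answer B."}.get(prompt, "unsure")


def candidate_model(prompt: str) -> str:
    """Stub for the new default (wrong on D only)."""
    canned = {"prompt A": "Answer A.", "prompt B": "Answer B.", "prompt C": "Answer C."}
    return canned.get(prompt, "unsure")


old = error_rate(current_model, CASES)
new = error_rate(candidate_model, CASES)
print(f"{relative_reduction(old, new):.1f}%")  # 50.0%
```

Keeping the models behind plain callables means the same harness works whether the integration pins a specific model version or points at `chat-latest`.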

If the default model is now reliable enough for medicine, law, and finance — what’s still stopping your team from deploying it?

Frequently Asked Questions

What is GPT-5.5 Instant and how is it different from GPT-5.5?

GPT-5.5 Instant is the new default model for all ChatGPT users — it replaced the previous default (GPT-5.3 Instant) without requiring users to switch settings or pay for an upgrade. It is optimized for speed and everyday use, with 52.5% fewer hallucinations on high-stakes topics and a new memory sources feature that shows users exactly what context shaped each response.

What are memory sources in ChatGPT?

Memory sources is a new transparency feature that shows users which pieces of stored context — past conversations, uploaded files, connected Gmail — were used to generate a specific response. Users can view, edit, or delete any stored context. For enterprise compliance teams, this is the audit trail that makes AI use in regulated industries defensible.

What is the MRC Protocol and who published it?

MRC (Multipath Reliable Connection) is an open networking standard published jointly by OpenAI, Microsoft, Nvidia, AMD, Broadcom, and Intel under the Open Compute Project. It enables AI training clusters to spray data packets across hundreds of simultaneous network paths with microsecond failure rerouting — dramatically improving the reliability and cost-efficiency of large-scale AI training. It was used to train GPT-5.5 and is already deployed at Oracle and Microsoft data centers.

How does GPT-5.5 Instant affect enterprise AI deployments?

For enterprises, the key changes are: (1) the accuracy bar for high-stakes use cases has materially improved, (2) memory sources provide the audit trail required for compliance in legal, finance, and healthcare, and (3) the `chat-latest` API endpoint now automatically routes to GPT-5.5 Instant, meaning integrations that already point at it get the upgrade without code changes.

Will MRC make AI cheaper for enterprises?

Indirectly, yes. MRC reduces the infrastructure cost of training large models by making AI compute clusters more efficient. As an open standard, it is available to every hyperscaler and enterprise data center without licensing fees. Over time, this bends the cost curve for AI training downward — which means future model generations will be cheaper to produce and, eventually, cheaper to access via API.

References

[1] OpenAI. “GPT-5.5 Instant — Official Announcement.” May 2026.

[2] The Verge. “OpenAI makes GPT-5.5 Instant the default ChatGPT model.” May 2026.

[3] OpenAI. “MRC: Supercomputer Networking for AI.” May 2026.

[4] LinkedIn. Jason Fleagle. “GPT-5.5 Instant: The Daily Driver Just Got a Brain Transplant.” May 2026.

About Jason Fleagle

Jason Fleagle is the Head of AI for Netsync and an AI and Growth Consultant who works with global brands on AI adoption and management. He helps humanize data so that every growth decision an organization makes is rooted in clarity and confidence. Jason has helped lead the development and delivery of over 500 AI projects and tools, and frequently conducts training workshops to help companies understand and adopt AI.

If you need a team of AI experts to help your organization deploy GPT-5.5 Instant safely and at scale, talk with our team at Netsync.