A year after R1 wiped $600 billion off Nvidia’s market cap, DeepSeek is back with the largest open-weights model ever built — and it costs pennies on the dollar to run.

There is a number that keeps haunting the American AI industry: $5.6 million.

That is what DeepSeek reportedly spent to train one of its earlier models — a figure that, when it surfaced in January 2025, sent Nvidia’s stock into the largest single-day market cap wipeout in history: roughly $600 billion, gone in a session. Silicon Valley called it a fluke. An anomaly. A one-time shock from a scrappy Chinese startup that got lucky with clever engineering and cheap chips.

Then, on April 24, 2026, DeepSeek did it again.

The Hangzhou-based lab released a preview of DeepSeek V4 — two open-source models, V4-Pro and V4-Flash, that together represent the most aggressive cost-performance challenge to closed-source AI yet. V4-Pro carries 1.6 trillion total parameters, making it the largest open-weights model ever released. V4-Flash, its leaner sibling, packs 284 billion parameters into a package that costs less per token than most small models from US labs. Both ship with a one-million-token context window as the default, not a premium add-on.

This is not a fluke. This is a strategy.

What DeepSeek Actually Released

DeepSeek V4 is a Mixture-of-Experts (MoE) architecture, which means the model’s full parameter count is not the number that matters for cost. What matters is how many parameters are activated per token. V4-Pro activates 49 billion parameters per inference pass. V4-Flash activates 13 billion. For context, that is comparable to running a mid-size dense model — but with the knowledge base of something far larger.

The architectural headline is the Hybrid Attention mechanism, which combines Compressed Sparse Attention (CSA) and Heavily Compressed Attention (HCA). The practical result: at a one-million-token context, V4-Pro requires only 27% of the inference compute and 10% of the KV cache memory compared to its predecessor, DeepSeek-V3.2. That is not an incremental improvement. That is a structural redesign of what long-context inference costs.

DeepSeek also introduced two training innovations worth noting. Manifold-Constrained Hyper-Connections (mHC) replace conventional residual connections to improve signal propagation stability across layers. The team also switched to the Muon Optimizer for faster convergence and greater training stability. The full model was pre-trained on over 32 trillion tokens.

Both models support three reasoning effort modes: Non-Think (fast, intuitive), Think High (deliberate, analytical), and Think Max (maximum reasoning budget). This gives operators granular control over the latency-cost-quality tradeoff at inference time — a meaningful operational advantage for teams running mixed workloads.

The Benchmarks: Where DeepSeek Wins, Where It Trails

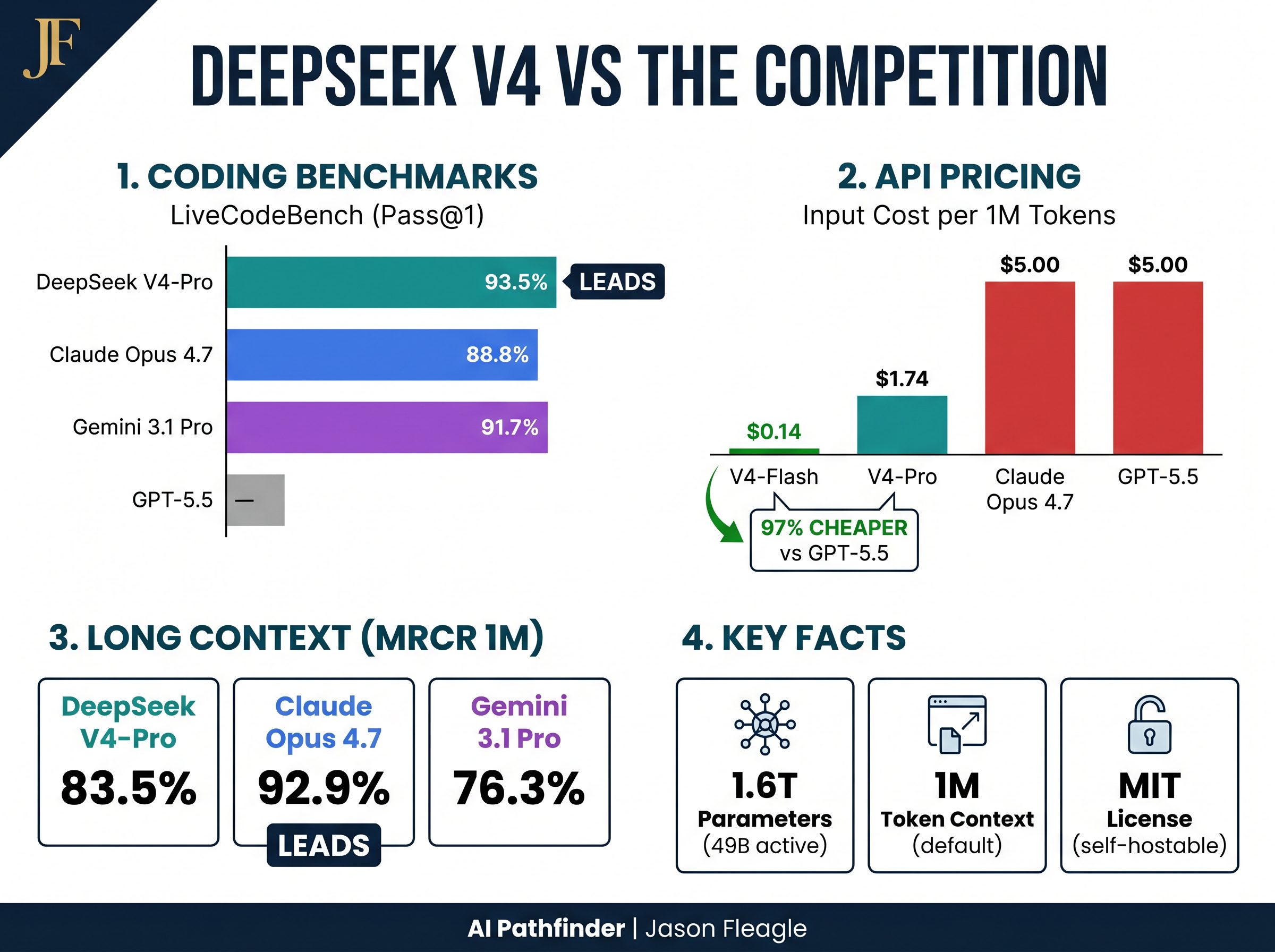

DeepSeek’s self-reported benchmarks for V4-Pro-Max — the maximum reasoning effort mode — show a model that is genuinely competitive at the frontier, with specific domains where it outright leads the field.

| Benchmark | DeepSeek V4-Pro Max | GPT-5.5 (xHigh) | Claude Opus 4.7 (Max) | Gemini 3.1 Pro (High) |

|---|---|---|---|---|

| LiveCodeBench (Coding) | 93.5% ★ LEADS | — | 88.8% | 91.7% |

| Codeforces Rating | 3,206 ★ LEADS | 3,168 | — | 3,052 |

| SWE-Bench Pro (GitHub Issues) | 55.4% | 57.7% | 64.3% ★ | 54.2% |

| Terminal-Bench 2.0 (Agentic) | 67.9% | 82.7% ★ | 69.4% | 68.5% |

| GPQA Diamond (Science) | 90.1% | 93.0% | 91.3% | 94.3% ★ |

| MMLU-Pro (Knowledge) | 87.5% | 87.5% | 89.1% | 91.0% ★ |

| MRCR 1M (Long Context) | 83.5% | — | 92.9% ★ | 76.3% |

| Apex Shortlist (Reasoning) | 90.2% ★ LEADS | 78.1% | 85.9% | 89.1% |

The pattern is clear. DeepSeek V4-Pro-Max leads the world in competitive coding — both on LiveCodeBench (93.5%) and Codeforces rating (3,206), beating GPT-5.5 and Gemini outright. It also leads on Apex Shortlist reasoning. Where it trails is in complex agentic task execution (Terminal-Bench 2.0), real-world software engineering (SWE-Bench Pro), and general scientific reasoning (GPQA Diamond), where GPT-5.5 and Gemini 3.1 Pro maintain meaningful leads.

The honest read: V4-Pro is a world-class coding and reasoning engine at a fraction of the cost of its closed-source rivals. It is not a drop-in replacement for GPT-5.5 in agentic workflows — yet. But for pure code generation, competitive programming, and long-document analysis, it is the best open-weights option available, full stop.

The Economics That Should Alarm Every US AI Provider

Here is the table that matters most for operators making infrastructure decisions right now:

| Model | Input (per 1M tokens) | Output (per 1M tokens) | Context Window | Open Weights? |

|---|---|---|---|---|

| DeepSeek V4-Flash | $0.14 | $0.28 | 1M tokens | Yes (MIT) |

| DeepSeek V4-Pro | $1.74 | $3.48 | 1M tokens | Yes (MIT) |

| GPT-5.5 | $5.00 | $30.00 | ~1M tokens | No |

| Claude Opus 4.7 | $5.00 | $25.00 | ~1M tokens | No |

V4-Flash costs $0.14 per million input tokens — less than GPT-5.4 Nano, OpenAI’s cheapest model. V4-Pro, at $1.74 input, is roughly 65% cheaper than GPT-5.5 on input and 88% cheaper on output. For teams running high-volume inference workloads — document processing, code review pipelines, RAG systems — those numbers are not marginal. They are existential for budget planning.

Because both models are released under the MIT License with open weights, enterprises with the infrastructure to self-host can drive their marginal cost of inference to near zero. V4-Pro is an 865GB download. V4-Flash is 160GB. For organizations with on-premise GPU clusters — or any team building on Llama or Mistral — the path to self-hosted frontier-class inference just got dramatically shorter.

“DeepSeek’s V4 preview is a serious flex — lower inference costs than previous models, with excellent agent capability at significantly lower cost.”

— Neil Shah, VP of Research, Counterpoint Research

The Distillation Controversy and the Geopolitical Undercurrent

DeepSeek’s V4 release does not exist in a vacuum. It arrives against a backdrop of escalating tension between the US government and Chinese AI development — and a specific, serious accusation from OpenAI.

In a memo to US lawmakers, OpenAI alleged that DeepSeek had been using a technique called distillation to train its models — essentially, using the outputs of OpenAI’s models (and those of other US AI companies) as training data to build a competing system. “We have observed accounts associated with DeepSeek employees developing methods to circumvent OpenAI’s access restrictions,” the memo stated, according to Reuters. Anthropic has raised similar concerns. The practice, if confirmed, would represent a systematic effort to extract the value of billions of dollars in US AI R&D investment without authorization.

DeepSeek has not publicly responded to the specific distillation allegations. The company’s official statement following V4’s release focused on technical achievements and reiterated its commitment to open-source development, noting: “We remain committed to longtermism, advancing steadily toward our ultimate goal of AGI.”

The chip question is equally unresolved. US export controls restrict Nvidia’s most advanced AI chips from reaching Chinese buyers, and it remains unclear which hardware was used to train V4. What is clear is that Huawei confirmed on the same day as V4’s release that its Ascend AI processors can support the new models — a signal that Beijing’s push for domestic chip sovereignty is gaining traction. Chinese contract chip manufacturers SMIC and Hua Hong Semiconductor surged 9% and 15%, respectively, on the news. Meanwhile, Chinese AI rivals MiniMax and Zhipu each fell around 8% as investors recalibrated around DeepSeek’s renewed dominance.

The data sovereignty question: DeepSeek’s API routes data through servers in China. For organizations subject to HIPAA, SOC 2, GDPR, or US federal data handling requirements, the API is not a viable option regardless of price. The self-hosted open-weights path is the only compliant route — and it requires meaningful infrastructure investment.

The Competitive Landscape Redraws Itself

DeepSeek V4 lands in a week that already saw GPT-5.5 (covered in AI Pathfinder Issue #52) and Claude Opus 4.7 ship to enterprise customers. The timing is not coincidental — it is a statement. The Chinese lab is signaling that it intends to compete at the frontier, on the same release cadence, at a fraction of the cost.

Morningstar senior equity analyst Ivan Su put it plainly: “Traders have already priced in the reality that Chinese AI is competitive and cheaper to use.” V4 is unlikely to produce the same market shock as R1 — the element of surprise is gone. But Su noted something more structurally significant: “DeepSeek’s latest positioning places other Chinese open-source models as direct competitors. This is a framing that didn’t exist with R1, and that alone tells you how much domestic competition has intensified.”

In other words, the story is no longer just China vs. the US. It is now China vs. China vs. the US — with DeepSeek, Alibaba’s Qwen series, ByteDance’s models, and a dozen other Chinese labs all competing for open-source dominance. That dynamic accelerates the pace of capability improvement and cost reduction for everyone, including US developers who build on open-weights models.

Counterpoint Research’s Wei Sun framed the chip angle as a long-term structural shift: “V4’s ability to run natively on local chips could have massive implications, helping Beijing achieve more AI sovereignty and further reduce reliance on Nvidia. This will ultimately speed up global AI developments as well.” (CNBC)

5 Immediate Use Cases for DeepSeek V4

1. High-Volume Code Review and Generation (V4-Pro)

V4-Pro leads the world on LiveCodeBench (93.5%) and Codeforces (3,206 rating). For teams running automated code review pipelines, PR summarization, or code generation at scale, V4-Pro delivers frontier-class coding performance at $1.74/M input — roughly one-third the cost of GPT-5.5 or Claude Opus 4.7. The API is fully compatible with OpenAI’s ChatCompletions format: simply swap in deepseek-v4-pro as your model parameter.

2. Long-Document Analysis and RAG Pipelines (V4-Pro or Flash)

The 1M-token context window is not a marketing number — V4-Pro scores 83.5% on MRCR 1M needle-in-a-haystack retrieval, outperforming Gemini 3.1 Pro on that benchmark. For legal document review, financial report analysis, or enterprise RAG systems ingesting large corpora, the combination of 1M context and low inference cost makes V4 a compelling infrastructure choice. V4-Flash at $0.14/M input is the most cost-effective option for high-throughput document processing pipelines.

3. Agentic Coding Workflows with Claude Code or OpenCode (V4-Pro)

DeepSeek has explicitly integrated V4 with Claude Code, OpenClaw, and OpenCode — the same agent frameworks that power many enterprise coding workflows. V4-Pro is already driving DeepSeek’s own in-house agentic coding infrastructure. For teams using these frameworks, V4-Pro is a viable and significantly cheaper backend model for tasks that do not require GPT-5.5’s Terminal-Bench-level agentic performance.

4. Multilingual Knowledge Work (V4-Pro)

V4-Pro scores 90.3% on MMMLU (multilingual MMLU) and 93.1% on C-Eval (Chinese language benchmark), making it the strongest open-source option for organizations operating in multilingual or Chinese-language environments. For global enterprises with Asia-Pacific operations, this is a meaningful capability gap that V4 closes relative to US-only models.

5. Self-Hosted Inference for Data-Sensitive Workloads (Open Weights)

For organizations that cannot route data through any third-party API — healthcare, defense, legal, financial services — V4-Flash’s 160GB open-weights download is the most capable self-hostable model available at that size. V4-Pro at 865GB is viable for organizations with on-premise A100 or H100 clusters. Both are released under the MIT License: no usage restrictions, no data sharing requirements, no vendor lock-in.

Your DeepSeek V4 Operator Action Plan

- Run a cost audit on your current AI API spend. Pull your last 30 days of token usage from OpenAI or Anthropic. Calculate what that same workload would cost on V4-Flash ($0.14/M input) and V4-Pro ($1.74/M input). If the delta is significant, that is your business case for a parallel test.

- Test V4-Pro on your highest-volume coding task. The API is live today. Update your model parameter to

deepseek-v4-pro— no other changes required if you are using OpenAI’s ChatCompletions format. Run your standard code generation or review prompt and benchmark the output quality against your current model. - Evaluate your data sovereignty posture before routing any sensitive data. If your data is subject to HIPAA, GDPR, SOC 2, or federal compliance requirements, the DeepSeek API is not a viable path. Evaluate the self-hosted open-weights option and assess your infrastructure requirements before making any production decisions.

Frequently Asked Questions

Is DeepSeek V4 better than GPT-5.5?

It depends on the task. DeepSeek V4-Pro-Max leads GPT-5.5 in competitive coding (LiveCodeBench 93.5% vs. no published score) and Codeforces rating (3,206 vs. 3,168). However, GPT-5.5 leads significantly on agentic task execution (Terminal-Bench 2.0: 82.7% vs. 67.9%) and science reasoning. For coding-heavy workloads, V4-Pro is the better value. For complex multi-step agentic workflows, GPT-5.5 currently holds the edge. Read our full GPT-5.5 breakdown in AI Pathfinder Issue #52.

Is DeepSeek V4 safe to use for enterprise data?

The DeepSeek API routes data through servers in China, which creates compliance risks for organizations subject to HIPAA, GDPR, SOC 2, or US federal data handling requirements. The self-hosted open-weights version (MIT License) eliminates this concern, but requires significant on-premise GPU infrastructure. Organizations should conduct a formal data sovereignty review before routing any sensitive data through the DeepSeek API.

How much does DeepSeek V4 cost?

V4-Flash costs $0.14 per million input tokens and $0.28 per million output tokens — making it cheaper than GPT-5.4 Nano. V4-Pro costs $1.74 per million input tokens and $3.48 per million output tokens, compared to $5.00/$30.00 for GPT-5.5. Both models are also available as open weights under the MIT License at no API cost if self-hosted. See the official DeepSeek pricing page for the latest rates.

What is the distillation controversy with DeepSeek?

OpenAI has accused DeepSeek of using “distillation” — a technique where outputs from one AI model are used as training data to build another. In a memo to US lawmakers, OpenAI stated it had “observed accounts associated with DeepSeek employees developing methods to circumvent OpenAI’s access restrictions.” Reuters reported on the allegations in February 2026. DeepSeek has not publicly responded to the specific claims.

How do I access DeepSeek V4 today?

You can access DeepSeek V4 via three channels: (1) the web interface at chat.deepseek.com via Instant Mode or Expert Mode; (2) the API by updating your model parameter to deepseek-v4-pro or deepseek-v4-flash — compatible with both OpenAI ChatCompletions and Anthropic API formats; or (3) open weights via Hugging Face under the MIT License. Note: legacy deepseek-chat and deepseek-reasoner models retire on July 24, 2026.

The Closing Question

A year ago, DeepSeek R1 forced a reckoning with a simple question: does the most expensive model win? The answer, it turned out, was no — not always, and not by as much as the US industry had assumed.

DeepSeek V4 asks a harder question: Are you willing to route your enterprise data through a Chinese open-source model if it cuts your API bill by 80%? For some organizations, the answer is yes, with appropriate risk management. For others, the answer is no, full stop. But the fact that the question is now serious — that it requires a deliberate, documented answer from your legal, security, and engineering teams — is itself the story.

The era of American AI exceptionalism by default is over. What replaces it is a more competitive, more complex, and ultimately more interesting landscape for everyone building on these systems.

Let me know in the comments: how are you thinking about DeepSeek V4 in your stack?

References

- DeepSeek. (2026, April 24). DeepSeek V4 Preview Release. DeepSeek API Docs.

- DeepSeek AI. (2026). DeepSeek-V4-Flash Model Card. Hugging Face.

- CNBC. (2026, April 24). China’s DeepSeek releases preview of long-awaited V4 model as AI race intensifies.

- DataCamp. (2026). DeepSeek V4: Features, Benchmarks, and Comparisons.

- Bloomberg. (2026, April 24). DeepSeek Unveils Newest Flagship a Year After AI Breakthrough.

- Reuters. (2026, February 12). OpenAI Accuses DeepSeek of Distilling US Models.

- Fleagle, J. (2026, April 24). DeepSeek V4: The $5.6M Model That Just Put Silicon Valley on Notice (Again). LinkedIn Pulse.

About Jason Fleagle

Jason Fleagle is the Head of AI for Netsync and an AI and Growth Consultant working with global brands to help with their successful AI adoption and management. He helps humanize data — so every growth decision an organization makes is rooted in clarity and confidence. Jason has helped lead the development and delivery of over 500 AI projects and tools, and frequently conducts training workshops to help companies understand and adopt AI.