In the race to adopt AI, it’s easy to get caught up in the hype of new models and futuristic use cases. But for organizations that handle sensitive data, such as a large public healthcare system serving a major metropolitan region, the stakes are far higher. The real challenge isn’t just what you can do with AI, but how you can do it responsibly.

This was the exact situation for one of our recent clients. As a safety-net provider for over 70,000 low-income and uninsured residents, this healthcare system saw a massive opportunity to leverage AI to improve clinical access, operational efficiency, and health equity. But they also faced a critical roadblock: how to unleash the power of AI without unleashing chaos?

They were grappling with challenges that are becoming increasingly common:

- Shadow AI: Unrestricted use of tools like ChatGPT by staff, creating massive security and privacy risks.

- HIPAA Compliance: The need to ensure every AI application and data transfer process was fully compliant with patient privacy regulations.

- Data Control: A lack of clear best practices for managing sensitive patient and hospital data across a complex network of clinics and community programs.

Before they could even begin to implement high-impact AI use cases, they needed a plan. They needed a Responsible AI Governance Framework.

Why AI Governance is No Longer Optional

The challenges this healthcare system faced are not unique. Across industries, organizations are grappling with the dual pressures of AI-driven innovation and the immense risks that come with it. The reality is, in 2025, AI governance is no longer a forward-thinking ideal—it is a fundamental business imperative. The data paints a stark picture of a landscape fraught with risk for those who fail to act.

The High Cost of Inaction: A Minefield of Risk

Without a robust governance framework, organizations are navigating a minefield of legal, financial, and reputational risks. The consequences of inaction are not abstract; they are tangible, costly, and increasingly common.

Shadow AI: The Hidden Threat Within

The rise of “shadow AI”—the unsanctioned use of AI tools by employees—has created an unprecedented security and compliance crisis. A 2025 report from Menlo Security revealed a staggering 68% surge in shadow AI usage, with 57% of employees admitting to inputting sensitive data into free-tier AI tools like ChatGPT. This translates to a massive, uncontrolled data exodus, with one report logging over 155,000 copy and 313,000 paste attempts into these tools in a single month.

For healthcare organizations, the threat is especially serious. Using tools like ChatGPT with Protected Health Information (PHI), without a Business Associate Agreement (BAA) in place with the vendor, constitutes a HIPAA violation. As one expert from the USC Price School noted, “Once you enter something into ChatGPT, it is on OpenAI servers and they are not HIPAA compliant. That’s the real issue.”

Real-World Failures: When Governance is an Afterthought

The headlines are filled with cautionary tales of AI governance failures:

- Algorithmic Bias: A major bank faced a PR nightmare and legal action after its AI-driven credit approval system was found to be giving women lower credit limits than men, a direct result of training on biased historical data.

- Privacy Violations: Paramount was hit with a $5 million lawsuit for sharing subscriber data without proper consent through its AI-powered recommendation engines.

- Healthcare Misdiagnosis: In a stark example of data quality risks, an AI system trained primarily on a pediatric population performed poorly when applied to a more diverse patient population, leading to misdiagnosis and inappropriate treatment recommendations.

These examples underscore a critical truth: without a framework for fairness, accountability, and transparency, AI systems can and will fail, with devastating consequences.

The Staggering ROI of Getting it Right

Conversely, the business case for investing in AI governance is stronger than ever. It is not merely a defensive measure; it is a strategic enabler of innovation and a driver of competitive advantage. Research from IBM highlights a holistic ROI framework for AI ethics, encompassing not just economic returns but also reputational benefits and enhanced organizational capabilities.

Organizations with mature AI governance programs report faster deployments, fewer risks, and stronger stakeholder buy-in, resulting in clear financial ROI. As Reggie Townsend, Director and VP of the Data Ethics Practice at SAS, aptly puts it, “Our work has to not just contribute to the mission of the organization—but also has to contribute to the profit margin of the organization. Otherwise, it comes across as a charity, and charity doesn’t get funded for very long.”

The message is clear: in the age of AI, governance is not a cost center; it is a strategic investment in trust, resilience, and long-term value creation. The question is no longer if you need AI governance, but how quickly you can implement it.

The OnStak 3-Phase Blueprint for AI Governance

To address the healthcare system’s challenges, we deployed our proven 3-phase methodology, a 25-week engagement designed to build a rock-solid foundation for secure, compliant, and production-ready AI.

This isn’t just about writing policies; it’s about creating a living, breathing ecosystem for responsible innovation.

Phase 1: Assessment – Establishing the Baseline

The first step is to create a clear, organization-wide picture of AI maturity. You can’t build a roadmap if you don’t know where you’re starting from.

- Activities: We conducted AI readiness assessments across people, processes, and technology; inventoried all existing AI tools and pilots; and facilitated workshops with stakeholders from clinical to operational domains.

- Key Deliverables: A prioritized portfolio of AI use cases, a cultural and organizational readiness assessment, and a foundational governance toolkit and roadmap.
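To make the inventory step concrete, here is a minimal sketch of how an AI tool inventory might be recorded and sorted by risk. The field names, the three risk tiers, and the sample entries are all hypothetical illustrations, not the actual toolkit or data from this engagement.

```python
from dataclasses import dataclass


@dataclass
class AITool:
    name: str
    sanctioned: bool   # approved by IT/compliance? (assumed field)
    handles_phi: bool  # touches Protected Health Information? (assumed field)
    has_baa: bool      # Business Associate Agreement signed with the vendor? (assumed field)


def risk_tier(tool: AITool) -> str:
    """Classify a tool into an illustrative risk tier."""
    if tool.handles_phi and not tool.has_baa:
        return "high"    # PHI flowing to a vendor with no BAA
    if not tool.sanctioned:
        return "medium"  # shadow AI, but no PHI exposure recorded
    return "low"


# Hypothetical sample entries, for illustration only.
inventory = [
    AITool("public chatbot (free tier)", sanctioned=False, handles_phi=True, has_baa=False),
    AITool("scheduling assistant pilot", sanctioned=True, handles_phi=True, has_baa=True),
    AITool("marketing copy generator", sanctioned=False, handles_phi=False, has_baa=False),
]

# List the riskiest tools first so they are reviewed first.
order = {"high": 0, "medium": 1, "low": 2}
for tool in sorted(inventory, key=lambda t: order[risk_tier(t)]):
    print(f"{risk_tier(tool):6} {tool.name}")
```

Even a simple record like this gives stakeholder workshops a shared starting point: everyone can see which tools exist and which ones need attention first.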

Phase 2: Planning – Designing the Governance Ecosystem

With the baseline established, we designed a governance framework tailored to the healthcare system’s unique mission and regulatory environment.

- Activities: We defined governance structures and ethical guidelines, designed an AI Center of Excellence (CoE) to serve as the long-term engine for execution, and created detailed implementation plans for prioritized use cases.

- Key Deliverables: A full AI Governance Framework and Strategic Roadmap, an AI CoE Operating Model, and clear Policy, Risk, and Compliance Standards.

Phase 3: Execution – Operationalizing Governance and Proving Value

The final phase is about translating strategy into tangible results. We implemented high-impact proofs of concept (PoCs) to validate the framework and demonstrate measurable value from day one.

- Activities: We deployed operational PoCs, implemented governance tools for policy enforcement, and conducted training to build internal AI fluency.

- Key Deliverables: A Final Governance Playbook, a Training & Enablement Program, and a Long-Term Sustainability and Scalability Plan to ensure the organization can continue its AI journey with confidence.
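As an illustration of what a policy-enforcement tool can look like, the sketch below screens a prompt for PHI-like patterns before it is allowed to leave the network. The pattern set and function names are assumptions made for this example, and the MRN format is invented. Pattern matching alone is not sufficient for HIPAA de-identification; treat this as a first-line guardrail, not the governance tooling used in this engagement.

```python
import re

# Illustrative patterns only; real PHI detection needs far broader coverage.
PHI_PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "phone": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
    "mrn": re.compile(r"\bMRN[:\s]*\d{6,10}\b", re.IGNORECASE),  # hypothetical MRN format
}


def screen_prompt(text: str) -> list[str]:
    """Return the names of the PHI-like patterns found in the text."""
    return [name for name, pattern in PHI_PATTERNS.items() if pattern.search(text)]


def allow_external_submission(text: str) -> bool:
    """Allow the prompt only if no PHI-like pattern is present."""
    return not screen_prompt(text)


if __name__ == "__main__":
    prompt = "Patient MRN: 00123456, call 555-123-4567 to confirm."
    print(screen_prompt(prompt))              # names of matched patterns
    print(allow_external_submission(prompt))  # False: the prompt is blocked
```

A check like this would typically sit in a proxy or browser extension between staff and external AI tools, paired with logging so the AI CoE can see where training is needed.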

Is Your Organization Ready for AI?

Navigating the Landscape of AI Governance Frameworks

For organizations ready to build their own governance strategy, the good news is that you don’t have to start from scratch. A number of robust frameworks have emerged to provide guidance, though they vary in scope and legal authority. Understanding this landscape is key to selecting and tailoring the right approach for your organization.

These frameworks, while different, share a common set of core principles: fairness, accountability, explainability, security, and compliance. The key is not to adopt one wholesale, but to leverage them as a toolkit to build a governance structure that is tailored to your specific industry, regulatory environment, and organizational culture.

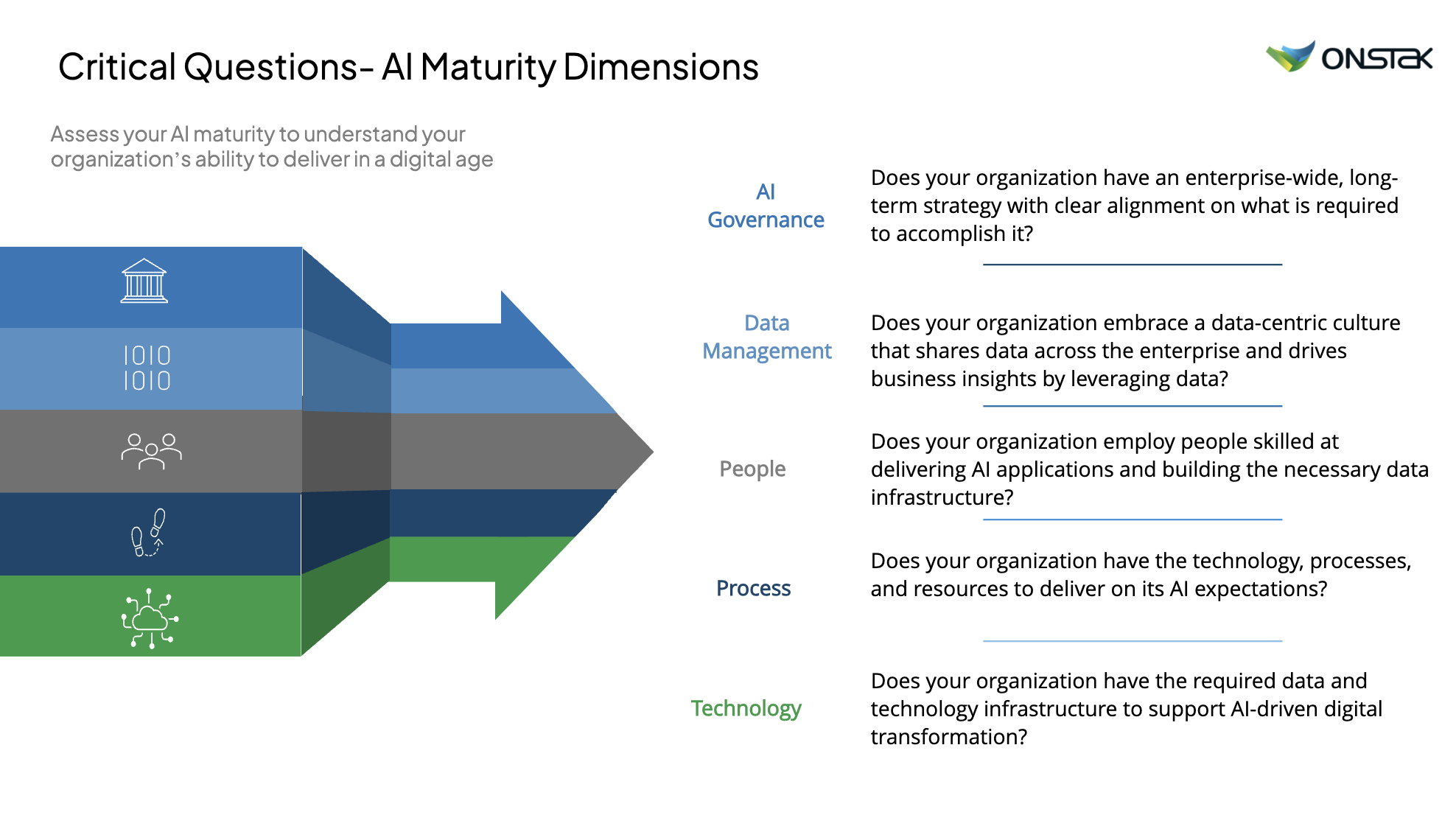

This framework provides a repeatable model for any organization looking to move beyond AI hype and into real-world implementation. As you consider your own AI journey, ask yourself these critical questions across the five dimensions of AI maturity:

- AI Governance: Does your organization have an enterprise-wide, long-term strategy with clear alignment on what is required to accomplish it?

- Data Management: Does your organization embrace a data-centric culture that shares data across the enterprise and drives business insights?

- People: Does your organization employ people skilled at delivering AI applications and building the necessary data infrastructure?

- Process: Does your organization have the technology, processes, and resources to deliver on its AI expectations?

- Technology: Does your organization have the required data and technology infrastructure to support AI-driven digital transformation?
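One simple way to turn these questions into a starting baseline is to score each dimension and see where the gaps are. The sketch below assumes a 1-to-5 self-rating per dimension, which is our own illustrative scale rather than a formal maturity model, and the example scores are hypothetical.

```python
DIMENSIONS = ["AI Governance", "Data Management", "People", "Process", "Technology"]


def summarize(scores: dict[str, int]) -> tuple[float, str]:
    """Return the average score and the weakest dimension.

    Assumes each dimension is self-rated from 1 (ad hoc) to 5 (mature).
    """
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"missing scores for: {missing}")
    for d in DIMENSIONS:
        if not 1 <= scores[d] <= 5:
            raise ValueError(f"score for {d} must be between 1 and 5")
    average = sum(scores[d] for d in DIMENSIONS) / len(DIMENSIONS)
    # On ties, the dimension listed first in DIMENSIONS is reported.
    weakest = min(DIMENSIONS, key=lambda d: scores[d])
    return average, weakest


# Hypothetical self-assessment, for illustration only.
example = {
    "AI Governance": 2,
    "Data Management": 3,
    "People": 3,
    "Process": 2,
    "Technology": 4,
}
average, weakest = summarize(example)
print(f"average maturity: {average:.1f}, start with: {weakest}")
```

The number itself matters less than the conversation it starts: the lowest-scoring dimension is usually where a governance roadmap should begin.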

Answering these questions honestly is the first step toward building a responsible AI foundation. This healthcare system is now on a clear path to not only implementing innovative AI solutions but doing so in a way that builds patient trust, ensures compliance, and delivers on its core public health mission. It’s a powerful reminder that in the age of AI, the most important innovations aren’t just the tools themselves, but the frameworks we build to guide them.

If you would like a data sheet on how OnStak can help you integrate a Responsible AI Governance framework, click here.

Have any other questions about AI governance frameworks? Let us know in the comments.

About OnStak

OnStak specializes in comprehensive AI implementation across four core expertise areas: AI/Data for intelligent knowledge management, AI/Edge for distributed operational intelligence, AI/Performance for optimized system efficiency, and AI/Migrations for seamless technology integration. Our proven methodology helps manufacturing leaders achieve operational transformation while maximizing return on investment.

Here are a few recent AI projects we’ve delivered:

- Case Study: Cricket Sports Team Uses AI to Gain An Advantage

- Case Study: Transforming Mental Healthcare With AI

- Case Study: ARI AI Chatbot Helps Military Veterans Community

- Case Study: AI Helps Healthcare Professionals Roleplay Patient Care

- Case Study: AI Document Processing for Real Estate Investment

About Jason Fleagle

Jason Fleagle is the Chief AI Architect at OnStak, and is also a writer, entrepreneur, and consultant specializing in tech, AI, and growth. He helps humanize data—so every growth decision an organization makes is rooted in clarity and confidence. Jason has helped lead the development and delivery of over 150 AI applications, and frequently conducts training workshops to help companies understand and adopt AI. With a strong background in digital marketing, content strategy, and technology, he combines technical expertise with business acumen to create scalable solutions. He is also a content creator, producing videos, workshops, and thought leadership on AI, entrepreneurship, and growth. He continues to explore ways to leverage AI for good and improve human-to-human connections while balancing family, business, and creative pursuits.
