Hey everyone,

Something happened that I honestly didn’t see coming. After five years of keeping their most powerful AI technology locked away behind APIs and subscription walls, OpenAI did the unthinkable.

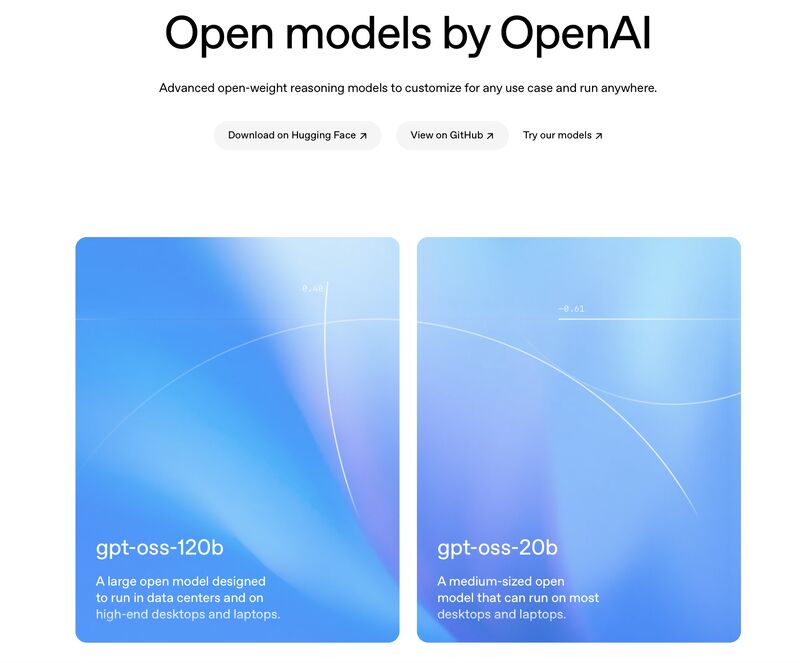

OpenAI just released GPT-OSS—their first open-weight models since GPT-2 back in 2019. This isn’t just another product launch or incremental update. This is a complete strategic reversal that fundamentally changes the AI landscape for businesses of every size.

And if you’re a business leader trying to figure out how AI fits into your strategy, this changes everything.

The Move Nobody Saw Coming

For the past five years, OpenAI has been the poster child for the “closed AI” approach. Want to use GPT-4? Pay per API call. Need enterprise features? Subscribe to ChatGPT Plus or Enterprise. Want to customize the model for your specific use case? Too bad—take what we give you or build your own.

This strategy made sense from a business perspective. Why give away your competitive advantage when you can monetize it? Why open-source your billion-dollar research when you can charge premium prices for access?

But yesterday, OpenAI flipped the script entirely.

They released not one, but two powerful open-weight models under the permissive Apache 2.0 license, which means you can download, modify, and use them commercially without paying a single royalty. We’re talking about enterprise-level AI that can now run on your own hardware, under your complete control.

GPT-OSS-120b packs 117 billion parameters and, by OpenAI’s own benchmarks, performs close to their o4-mini model on core reasoning tasks. GPT-OSS-20b, with 21 billion parameters, can run on a laptop with just 16 GB of memory.

Think about that for a moment. AI reasoning power that was previously locked behind expensive enterprise subscriptions is now available on Hugging Face, free for anyone to download and use.

Why This Matters More Than You Think

As someone who’s been helping businesses navigate AI implementation for years, I can tell you that this release solves three massive problems that have been holding back AI adoption:

The Cost Problem: Until now, serious AI capabilities required ongoing subscription costs that could quickly spiral out of control. A mid-sized business using AI for customer service, content creation, and data analysis could easily spend $10,000+ per month on API calls. Now? Once the hardware is in place, you can run comparable capabilities in-house, and the marginal cost of each request is little more than electricity.

The Control Problem: When you’re dependent on external APIs, you’re at the mercy of rate limits, service outages, and policy changes. Your AI-powered business processes can be disrupted by factors completely outside your control. With open-weight models, you own the entire stack.

The Privacy Problem: Many businesses, especially in regulated industries, simply can’t send sensitive data to external AI services. Open-weight models that run locally solve this completely—your data never leaves your infrastructure.

But here’s what really excites me about this release: it’s not just about solving problems. It’s about unlocking possibilities that weren’t feasible before.

The Strategic Implications

This move by OpenAI isn’t just about technology—it’s about market strategy, and it reveals something fascinating about where the AI industry is heading.

For years, the conventional wisdom was that AI would be dominated by a few large companies with the resources to train massive models. The barrier to entry was simply too high for smaller players to compete.

OpenAI’s release suggests they believe something different: that the future belongs to those who can build the best applications on top of AI, not just those who can hoard the best models.

This is a classic platform strategy. By releasing these models, OpenAI is essentially saying, “We want to be the foundation that everyone builds on, not the gatekeeper that everyone pays.”

It’s brilliant, actually.

Instead of competing solely on model performance, they’re competing on ecosystem value. They want developers, entrepreneurs, and businesses to build their AI solutions on OpenAI’s foundation, creating a network effect that becomes increasingly difficult for competitors to challenge.

What This Means for Your Business

If you’re a business leader who’s been watching AI from the sidelines, waiting for the right moment to jump in, this might be it.

The democratization of AI just accelerated dramatically. Capabilities that were previously available only to tech giants with massive budgets are now accessible to any business with a decent laptop and some technical know-how.

Here’s what becomes possible:

Custom AI Assistants: You can now build AI assistants fine-tuned on your business processes, industry knowledge, and company data, without sending any of that information to external services.

Automated Reasoning Systems: Complex business decisions that previously required human analysis can now be automated using AI that runs entirely on your infrastructure.

AI-Powered Products: If you’re building software products, you can now embed sophisticated AI capabilities without worrying about ongoing API costs eating into your margins.

Edge AI Applications: The smaller 20B model can run on edge devices with around 16 GB of memory, opening up possibilities for AI applications in manufacturing, retail, healthcare, and other industries where local processing is critical.

But here’s the key insight: this isn’t just about cost savings or technical capabilities. It’s about strategic independence.

When your AI capabilities are dependent on external services, you’re essentially renting your competitive advantage. You’re subject to price increases, service changes, and the strategic decisions of companies whose interests may not align with yours.

With open-weight models, you own your AI capabilities. You can modify them, optimize them for your specific use cases, and build sustainable competitive advantages that can’t be taken away by a vendor’s policy change.

The OnStak Perspective

At OnStak, we’ve been helping businesses navigate AI implementation across four key areas: AI/Data for traditional data scenarios, AI/Edge for IoT and edge computing, AI/Performance for system optimization, and AI/Migrations for cloud and on-premises transitions.

This OpenAI release is particularly exciting for our AI/Edge and AI/Performance work. The ability to run sophisticated AI models locally opens up entirely new possibilities for edge computing applications and performance optimization scenarios.

For businesses in regulated industries, this could be transformational. We can now implement AI solutions that provide enterprise-level capabilities while maintaining complete data sovereignty and compliance control.

The AI/Migrations implications are equally significant. Organizations that have been hesitant to move AI workloads to the cloud due to cost or control concerns now have viable alternatives for on-premises AI deployment.

The Practical Reality Check

Now, before you get too excited, let’s talk about the practical realities of implementing open-weight models in your business.

First, these models still require significant computational resources. The 120B model needs an 80 GB GPU to run effectively. The 20B model, while more accessible, still requires 16 GB of memory and decent processing power.

Second, implementing and maintaining these models requires technical expertise. You can’t just download them and expect them to work out of the box for your specific business needs. You need people who understand AI model deployment, optimization, and maintenance.

Third, while the models are free, the infrastructure to run them isn’t. You’ll need to factor in hardware costs, energy consumption, and ongoing maintenance when calculating your total cost of ownership.
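To put the first point in rough numbers, here is a back-of-the-envelope sketch of how much memory the model weights alone would occupy at different precisions. The function name is my own, and the 4.25 bits-per-weight figure is an assumption (roughly what a 4-bit block format with one shared 8-bit scale per 32 weights works out to). It ignores activations, context caches, and runtime overhead, so treat the results as a floor, not a sizing guide.

```python
def weight_memory_gb(params_billions: float, bits_per_param: float) -> float:
    """Approximate memory for the model weights only, in gigabytes.

    Billions of parameters times bits per parameter, divided by 8 bits per byte.
    Activations, context caches, and runtime overhead are NOT included.
    """
    return params_billions * bits_per_param / 8


# Parameter counts come from the article; the precisions are assumptions.
MODELS = {"GPT-OSS-120b": 117, "GPT-OSS-20b": 21}
PRECISIONS = {"16-bit": 16, "~4-bit (assumed 4.25 bits)": 4.25}

for model_name, params in MODELS.items():
    for precision_name, bits in PRECISIONS.items():
        gb = weight_memory_gb(params, bits)
        print(f"{model_name} at {precision_name}: about {gb:.1f} GB of weights")
```

Under these assumptions, the 120B model would need well over 200 GB at 16-bit precision but only around 62 GB at roughly 4 bits, which is consistent with it fitting on a single 80 GB GPU. The same arithmetic puts the 20B model at about 11 GB, in line with the 16 GB figure.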

But here’s the thing: even with these considerations, the economics are compelling for many businesses. The break-even point for running your own AI infrastructure versus paying for external services is lower than most people think, especially for businesses with consistent, high-volume AI usage.
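If you want to sanity-check that break-even claim for your own situation, the arithmetic is simple enough to sketch. The $10,000 monthly API spend below is the mid-sized-business example from earlier in this post; the hardware and running-cost figures are purely hypothetical placeholders, so plug in your own quotes before drawing conclusions.

```python
from typing import Optional


def break_even_months(
    hardware_cost: float,
    monthly_running_cost: float,
    monthly_api_cost: float,
) -> Optional[float]:
    """Months until self-hosting has paid back its upfront hardware cost.

    Returns None when running your own infrastructure costs as much as
    (or more than) the API each month, so it never pays back.
    """
    monthly_savings = monthly_api_cost - monthly_running_cost
    if monthly_savings <= 0:
        return None
    return hardware_cost / monthly_savings


# Hypothetical inputs for illustration only.
months = break_even_months(
    hardware_cost=40_000,        # assumed one-time GPU server purchase
    monthly_running_cost=2_000,  # assumed power, hosting, and maintenance
    monthly_api_cost=10_000,     # the API spend example used in this post
)
if months is None:
    print("Self-hosting never breaks even at this usage level.")
else:
    print(f"Break-even after about {months:.1f} months.")
```

Notice that the result is driven almost entirely by monthly savings. A business with low or sporadic AI usage may never break even, which is why consistent, high-volume usage matters so much here.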

Your Strategic Options

If you’re considering how to leverage this development, you have several strategic options:

The DIY Approach: Download the models, set up your own infrastructure, and build custom AI solutions tailored to your specific needs. This gives you maximum control and customization but requires significant technical investment.

The Hybrid Approach: Use open-weight models for sensitive or high-volume applications while continuing to use external services for less critical use cases. This balances control with convenience.

The Partner Approach: Work with AI implementation partners like us at OnStak who can help you deploy and manage open-weight models without building internal expertise. This gives you the benefits of ownership without the complexity of self-management. We can also provide ongoing managed services.

The Wait-and-See Approach: Monitor how the ecosystem develops around these models before making significant investments. This minimizes risk but potentially delays competitive advantages.

The right choice depends on your business needs, technical capabilities, and strategic objectives. But the key is understanding that you now have choices that didn’t exist before.
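To make the hybrid option a little more concrete, here is a minimal sketch of the kind of routing rule it implies: sensitive or high-volume workloads go to a locally hosted model, and everything else goes to an external service. Every name and the volume threshold are hypothetical; a real policy would reflect your own compliance rules and cost data.

```python
from dataclasses import dataclass

# Hypothetical cut-off; pick a value based on your own cost analysis.
HIGH_VOLUME_THRESHOLD = 100_000  # requests per month


@dataclass
class Workload:
    name: str
    contains_sensitive_data: bool
    monthly_requests: int


def choose_backend(workload: Workload, threshold: int = HIGH_VOLUME_THRESHOLD) -> str:
    """Return "local" for sensitive or high-volume workloads, else "external"."""
    if workload.contains_sensitive_data:
        return "local"  # data should not leave your infrastructure
    if workload.monthly_requests >= threshold:
        return "local"  # steady high volume favors owned hardware
    return "external"   # low-stakes, low-volume work stays on the API


# Illustrative workloads, not real data.
workloads = [
    Workload("patient-intake-summaries", True, 5_000),
    Workload("support-ticket-triage", False, 250_000),
    Workload("marketing-copy-drafts", False, 800),
]
for w in workloads:
    print(f"{w.name}: {choose_backend(w)}")
```

The point of writing the rule down, even this crudely, is that it forces you to decide which workloads actually justify owning the infrastructure.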

The Competitive Landscape Shift

This release also signals a broader shift in the competitive AI landscape. OpenAI is essentially forcing other AI companies to respond. Will Google release open-weight versions of Gemini? Will Anthropic follow suit with Claude? Will Meta accelerate their Llama development?

The pressure is now on every AI company to justify why their models should remain closed when comparable capabilities are available for free.

This is good news for businesses because it means more options, better pricing, and accelerated innovation across the entire AI ecosystem.

Looking Forward

The release of GPT-OSS represents more than just new AI models—it represents a fundamental shift toward AI democratization. The barriers to accessing sophisticated AI capabilities just dropped dramatically.

For business leaders, this creates both opportunities and challenges. The opportunity is clear: you can now access enterprise-level AI capabilities without enterprise-level budgets. The challenge is figuring out how to leverage these capabilities strategically and sustainably.

The businesses that succeed in this new landscape won’t necessarily be those with the biggest AI budgets. They’ll be the ones that can most effectively integrate AI into their core business processes, create genuine value for their customers, and build sustainable competitive advantages.

The AI revolution just became accessible to everyone. The question isn’t whether you can afford AI anymore—it’s what you’re going to build with it.

At OnStak, we’re excited to help businesses navigate this new landscape. Whether you’re looking to implement AI/Data solutions, explore AI/Edge applications, optimize AI/Performance, or plan AI/Migrations, we have the expertise to help you leverage these new capabilities strategically and successfully.

The democratization of AI has arrived. Are you ready to capitalize on it?

What’s your take on OpenAI’s strategic shift? Are you considering implementing open-weight models in your business? Share your thoughts and challenges in the comments below—let’s discuss how this changes the game for businesses of all sizes.
