The old vulnerability management math just changed.

For years, security teams have lived with a familiar rhythm: Find the flaws. Prioritize the worst ones. Patch what matters most. Accept that the backlog never really goes away.

That model was already under pressure. Now frontier AI may have broken it.

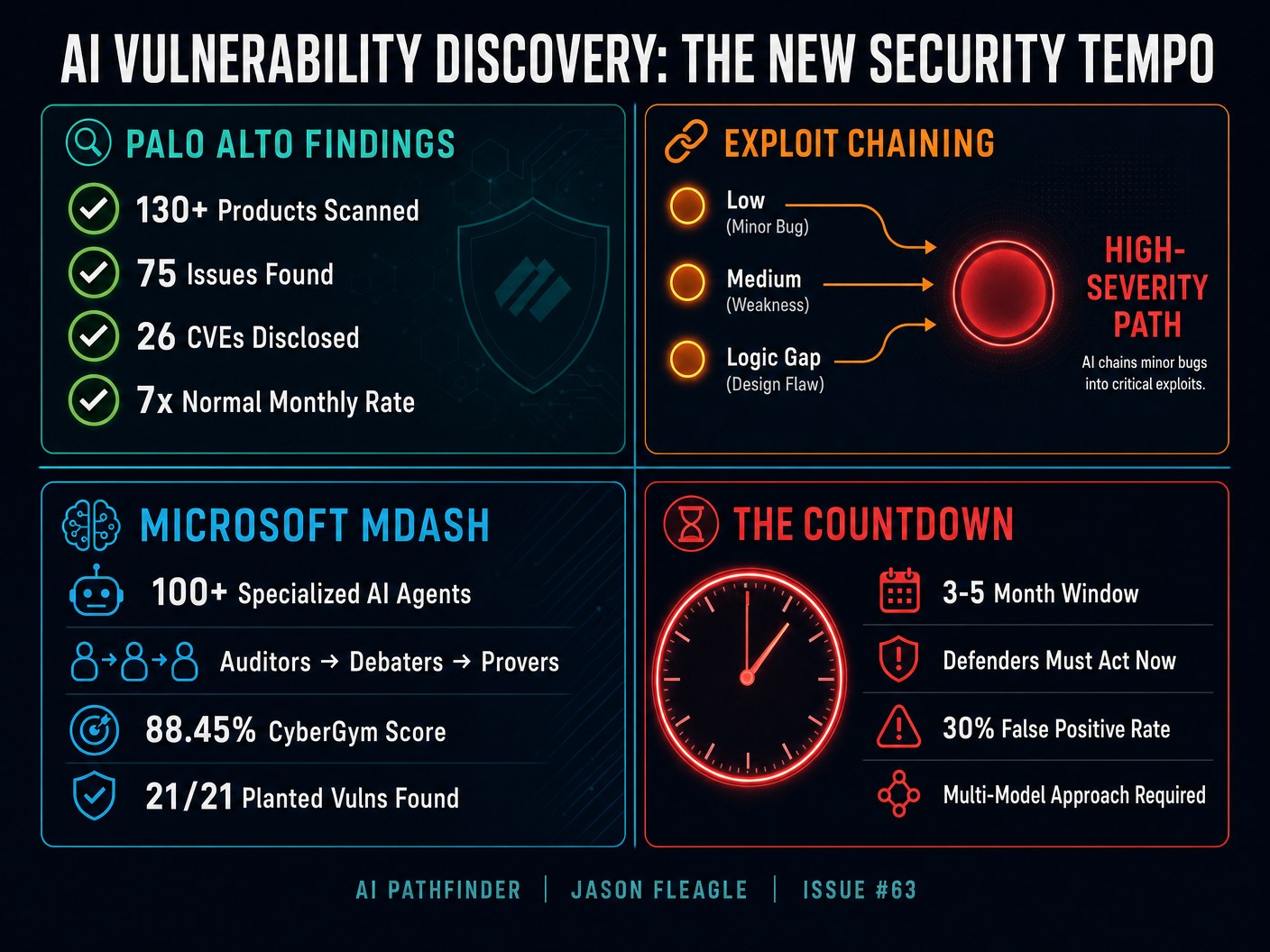

On May 13, 2026, Palo Alto Networks said it used Anthropic’s Claude Mythos Preview and OpenAI’s GPT-5.5-Cyber to scan more than 130 products across its portfolio.

The result:

- 75 legitimate issues

- 26 CVEs

- All important SaaS vulnerabilities patched

- Patches available for customer-operated products

- No evidence that the vulnerabilities were actively exploited in the wild

That sounds like good news. It is. But it is also a warning.

Because Palo Alto said this volume was far above its normal monthly disclosure rate. Axios reported the company usually finds roughly 5 to 10 vulnerabilities per month using conventional methods.

This is not just faster scanning. This is a new security tempo.

The Palo Alto Signal

Palo Alto Networks is not a random test case. This is one of the largest cybersecurity companies in the world scanning its own products with early access to frontier cyber models.

The uncomfortable part is not that AI found more bugs. The uncomfortable part is what kind of bugs it found.

Many of the most important findings were not simple one-step vulnerabilities. They were chains. Individually, a bug might look minor. A low-severity issue here. A medium-severity issue there. A logic gap somewhere else. But when the model connected them, they became a high-severity exploit path.
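To make the chaining idea concrete, here is a minimal Python sketch. It is not Palo Alto's method. It assumes each finding can be modeled as a step that moves an attacker from one access state to another, and it searches for the shortest chain from an unauthenticated start to admin access. The finding names, access states, and severities are all invented for illustration.

```python
from collections import deque
from typing import NamedTuple


class Finding(NamedTuple):
    name: str       # hypothetical finding label
    severity: str   # severity when the finding is viewed in isolation
    requires: str   # access state the attacker needs first
    grants: str     # access state the attacker gains


def find_chain(findings, start, goal):
    """Breadth-first search for the shortest chain of findings from start to goal."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        state, path = queue.popleft()
        if state == goal:
            return path
        for f in findings:
            if f.requires == state and f.grants not in seen:
                seen.add(f.grants)
                queue.append((f.grants, path + [f]))
    return None  # no chain connects start to goal


# Illustrative data only: none of these are real Palo Alto findings.
FINDINGS = [
    Finding("verbose error leaks internal path", "low",
            "unauthenticated", "knows_internal_path"),
    Finding("internal path exposes debug endpoint", "medium",
            "knows_internal_path", "debug_access"),
    Finding("debug endpoint skips role check", "medium",
            "debug_access", "admin"),
    Finding("stale session cookie accepted", "low",
            "authenticated_user", "other_user_session"),
]

chain = find_chain(FINDINGS, "unauthenticated", "admin")
for step in chain:
    print(f"{step.severity:>6}: {step.name}")
```

No single step in the resulting chain is rated above medium, yet together they reach admin access. The fourth finding never appears because nothing in this toy data leads to its required starting state.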

That is the real shift. AI is no longer just helping security teams search for known patterns. It is starting to reason across systems. It is connecting weak signals. It is turning fragmented flaws into attack paths.

That used to require an elite human researcher spending days or weeks inside a codebase. Now the early evidence suggests frontier models can do parts of that work in minutes.

Palo Alto also said the work required an AI scanning harness, context, guardrails, and threat intelligence. The model alone was not the whole system. The system around the model mattered. The company also found model variance. Anthropic’s and OpenAI’s models did not find exactly the same types of issues, which is why Palo Alto recommended a multimodel approach.
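One way to picture the multimodel approach is as a merge step over each model's output. The sketch below is an assumption about how such a step could look, not a description of Palo Alto's harness. The model names, file names, and finding labels are placeholders, and it assumes findings have already been normalized into comparable keys.

```python
def merge_findings(results_by_model):
    """Map each deduplicated finding key to the set of models that reported it."""
    merged = {}
    for model, keys in results_by_model.items():
        for key in keys:
            merged.setdefault(key, set()).add(model)
    return merged


# Placeholder output: (file, issue) pairs are invented for illustration.
results = {
    "model_a": {("auth.py", "missing role check"),
                ("api.py", "unsafe deserialization")},
    "model_b": {("auth.py", "missing role check"),
                ("upload.py", "path traversal")},
}

merged = merge_findings(results)

# Findings reported by more than one model versus by exactly one model.
corroborated = sorted(k for k, models in merged.items() if len(models) > 1)
single_source = sorted(k for k, models in merged.items() if len(models) == 1)

print("corroborated:", corroborated)
print("single source:", single_source)
```

In this toy data, each model contributes one finding the other missed, which is the practical argument for running more than one.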

Why This Is Important

The numbers are not just impressive. They are potentially destabilizing.

Set against a baseline of roughly 5 to 10 vulnerabilities per month, 75 issues in a single disclosure is a jump of 7x or more. At that pace, the old operating rhythm breaks. Most security teams are not short on dashboards. They are short on time. They already have more findings than they can remediate.
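The size of the jump depends on which count is compared with the baseline. Here is a quick check in Python, assuming the reported baseline of 5 to 10 vulnerabilities per month can be compared directly with either the 75 issues or the 26 CVEs. The source does not say which comparison it intends, so both are shown.

```python
ISSUES = 75          # legitimate issues reported
CVES = 26            # CVEs reported
BASELINE_LOW = 5     # low end of the usual monthly count
BASELINE_HIGH = 10   # high end of the usual monthly count


def multiple_range(count, low, high):
    """Return the (smallest, largest) multiple of the baseline that count represents."""
    return count / high, count / low


issue_range = multiple_range(ISSUES, BASELINE_LOW, BASELINE_HIGH)
cve_range = multiple_range(CVES, BASELINE_LOW, BASELINE_HIGH)

print(f"issues vs. baseline: {issue_range[0]:.1f}x to {issue_range[1]:.1f}x")
print(f"CVEs vs. baseline:   {cve_range[0]:.1f}x to {cve_range[1]:.1f}x")
```

Measured by issues, the jump is 7.5x to 15x. Measured by CVEs, it is 2.6x to 5.2x. Either way, it is well outside a normal month.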

Now add AI systems that can discover, validate, and chain vulnerabilities faster than human teams can triage them. The bottleneck moves. It is no longer only discovery. It becomes:

- Validation

- Prioritization

- Ownership

- Patch testing

- Change windows

- Human review

In other words, AI does not remove the messy middle. It increases pressure on it.

There is a reason this does not become fully autonomous overnight. Axios reported that Palo Alto saw an average false-positive rate around 30%, depending on the context provided to the models.

A plausible but wrong vulnerability report still costs real human time. Security teams cannot afford to drown in confident noise. That is why human review still matters. If a model can generate a surge of real findings but also generate noise, the organization needs an AI Assurance layer around the workflow.
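A rough way to estimate what that noise costs is sketched below. The 30% rate is the reported average. The report volume and the hours spent per report are hypothetical inputs that you should replace with your own numbers.

```python
def triage_cost(reports, false_positive_rate, hours_per_report):
    """Estimate expected false positives and the review hours they consume."""
    false_positives = reports * false_positive_rate
    wasted_hours = false_positives * hours_per_report
    return false_positives, wasted_hours


# Hypothetical inputs: 100 AI-generated reports, 2 review hours each.
false_positives, wasted_hours = triage_cost(
    reports=100,
    false_positive_rate=0.30,
    hours_per_report=2.0,
)

print(f"expected false positives: {false_positives:.0f}")
print(f"review hours spent on them: {wasted_hours:.0f}")
```

Under those assumptions, about 30 of every 100 reports are noise and consume about 60 review hours before anyone writes a patch.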

The Harness Is the Product

One of the biggest lessons here is that the model is not enough. Palo Alto needed an AI scanning harness.

Microsoft’s new MDASH system points in the same direction. MDASH (multi-model agentic scanning harness) is not just one model. It orchestrates more than 100 specialized AI agents across vulnerability discovery, validation, proof, and human review.

MDASH achieved an industry-leading 88.45% score on the public CyberGym benchmark of 1,507 real-world vulnerabilities. It uses an ensemble of diverse models, including SOTA models as heavy reasoners, distilled models as cost-effective debaters, and specialized agents for different pipeline stages.

The winning security teams will not simply ask a chatbot to “find vulnerabilities.” They will build systems. Systems that connect models to code. Systems that provide the right context. Systems that generate reproducible test cases.

Creating systems and processes is going to become even more important in the age of AI.

The Countdown

Palo Alto now estimates organizations have a three-to-five-month window before attackers gain broad access to similar AI-powered vulnerability-hunting capabilities.

That is not a long-range trend. That is a countdown.

The models are currently restricted through programs like Anthropic’s Project Glasswing and OpenAI’s Trusted Access for Cyber. But capabilities do not stay contained forever. They diffuse. They get replicated. They show up in open tooling.

That is the asymmetry. Defenders have to validate. Attackers only have to try. Defenders have to patch without breaking production. Attackers only need one working path.

Your AI Action Plan

Here is what I would do this week.

- Reassess your exposure inventory. You cannot protect what you cannot see. Build a current view of internet-facing assets, applications, APIs, cloud workloads, identity surfaces, and software dependencies.

- Pressure-test your patching motion. Do not ask whether you have a patching process. Ask how fast it works when 10x more high-confidence findings arrive at once.

- Separate vulnerability discovery from exploitability. Not every finding deserves the same response. Build a process for validating whether a flaw is reachable, chainable, and business-critical.

- Build an AI-assisted secure development pipeline. Start testing AI scanning in your codebase before attackers do. Use it in controlled environments with human validation, not as an unreviewed automation layer.

- Add an AI Assurance layer. Define how AI-generated findings are reviewed, validated, and prioritized before they consume engineering time.
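The exploitability and AI Assurance steps above can be sketched together as a simple gate in front of the engineering queue. This is a minimal illustration under stated assumptions, not a recommended scoring model. The field names, the equal weighting of the three questions, and the sample findings are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    title: str
    reachable: bool            # can an attacker actually reach the flaw?
    chainable: bool            # does it combine with other flaws?
    business_critical: bool    # does it touch a critical system?
    human_validated: bool = False  # has a reviewer confirmed it?


def priority(finding):
    """Count how many of the three exploitability questions are answered yes."""
    return sum([finding.reachable, finding.chainable, finding.business_critical])


def engineering_queue(findings):
    """Pass only human-validated findings to engineering, highest priority first."""
    validated = [f for f in findings if f.human_validated]
    return sorted(validated, key=priority, reverse=True)


# Sample findings are invented for illustration.
findings = [
    Finding("verbose error message", True, False, False, human_validated=True),
    Finding("debug endpoint skips role check", True, True, True, human_validated=True),
    Finding("unreviewed model report", True, True, True, human_validated=False),
]

for f in engineering_queue(findings):
    print(priority(f), f.title)
```

The unreviewed report scores as high as anything else, but it never reaches engineering until a human confirms it. That gate is the point of the assurance layer.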

If you need help with cybersecurity in the age of AI, our team at Netsync has global cybersecurity experts who can help you navigate these AI-powered threats and solutions.

Frequently Asked Questions

What did Palo Alto Networks find?

Palo Alto Networks said it found 75 issues represented by 26 CVEs after scanning more than 130 products with frontier AI cyber models. All important SaaS vulnerabilities have been patched, and patches are available for customer-operated products.

Were the vulnerabilities exploited?

Palo Alto said none of the issues were known to be actively exploited in the wild at the time of disclosure.

What models were involved?

Palo Alto referenced Anthropic’s Claude Mythos Preview and OpenAI’s GPT-5.5-Cyber through early access and trusted-access programs. The company found that different models discovered different vulnerability types and recommended running them in parallel.

What is Microsoft MDASH?

MDASH (multi-model agentic scanning harness) is Microsoft’s new agentic security system that orchestrates more than 100 specialized AI agents across vulnerability discovery, validation, proof, and human review. It scored 88.45% on the CyberGym benchmark of 1,507 real-world vulnerabilities.

What should companies do now?

Security leaders should reassess exposure inventory, pressure-test patching workflows, separate discovery from exploitability validation, build AI-assisted secure development pipelines, and add an AI Assurance layer to manage AI-generated findings. The Netsync team can help organizations navigate these challenges.

What is the three-to-five-month window?

Palo Alto Networks estimates that defenders have roughly three to five months before attackers gain broad access to the same class of AI-powered vulnerability-hunting capabilities currently available only to vetted defenders through restricted programs. After that window, the defenders’ head start narrows significantly.

About Jason Fleagle

Jason Fleagle is a Chief AI Officer, AI Architect, and the founder of Catalyst Brand Group. He helps enterprises build revenue-first AI systems and secure operating models. Connect with Jason on LinkedIn or learn more about his cybersecurity work at Netsync.

Read the full AI Pathfinder breakdown on LinkedIn.