The question almost every enterprise I talk to is quietly wrestling with right now is this: at what point does running your own AI on-premise actually make sense, and how does the cost of on-prem infrastructure compare with the cloud?

For the last 18 months, the default answer has been simple: use an API. It’s fast, it’s easy, and you don’t have to think about GPUs. But the ground is shifting under our feet. A new research paper, “A Cost-Benefit Analysis of On-Premise Large Language Model Deployment” [1], puts hard numbers to what many of us have been feeling in our gut: the economics of AI have fundamentally changed.

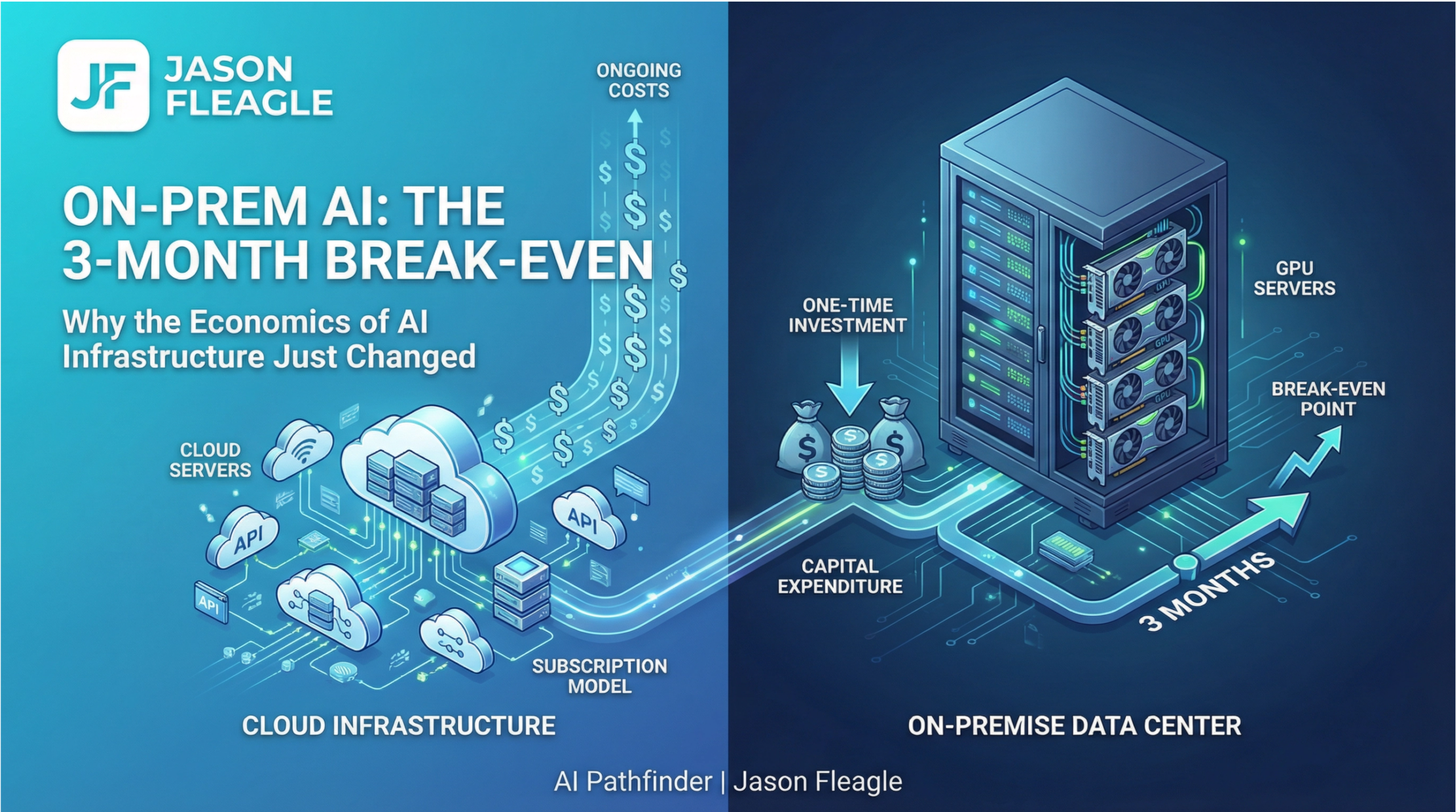

What Happened: The 3-Month Break-Even Point

Researchers from Carnegie Mellon University did the math that every CFO and executive leader has been waiting for. They analyzed 54 different deployment scenarios, pitting open-source models like Llama and Qwen running on local hardware against commercial API subscriptions from OpenAI, Anthropic, and Google.

The results are striking. For small and mid-sized models, the kind that handle the bulk of real-world enterprise tasks, the break-even point for on-premise deployment can be as short as three months.

Let’s be clear about what this means. The argument that cloud APIs are always cheaper is officially dead. For a significant portion of enterprise workloads, the cost of running your own models on your own hardware is not just competitive; it’s a compelling financial strategy.

Here’s the breakdown from the paper:

- Small Models (≤30B params): Break-even in just a few months against premium API pricing.

- Mid-Sized Models (70B–120B params): Typically break even within 6–24 months. This is the sweet spot for most enterprise use cases.

- Large Models (>200B params): Only make sense for sustained, high-volume workloads or strict data residency requirements, with break-even points often exceeding two years.
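To make the break-even idea concrete, here is a minimal Python sketch under a deliberately simplified model that is my assumption, not the paper’s exact methodology: on-prem cost is an upfront hardware purchase plus a flat monthly operating cost (power, hosting, operations), and API cost is a flat monthly bill for the same workload. The function name `break_even_months` and every dollar figure are hypothetical, chosen only to show how the math behaves.

```python
def break_even_months(hardware_cost: float,
                      monthly_onprem_opex: float,
                      monthly_api_cost: float) -> float:
    """Return months until cumulative on-prem cost equals cumulative API cost.

    Simplified model (an assumption for illustration, not the paper's method):
        on-prem total after m months = hardware_cost + monthly_onprem_opex * m
        API total after m months     = monthly_api_cost * m
    Setting them equal gives m = hardware_cost / (monthly_api_cost - monthly_onprem_opex).
    """
    monthly_savings = monthly_api_cost - monthly_onprem_opex
    if monthly_savings <= 0:
        # If running on-prem costs as much per month as the API, it never pays back.
        return float("inf")
    return hardware_cost / monthly_savings


# Hypothetical inputs for illustration only; they are not figures from the paper.
small = break_even_months(hardware_cost=30_000, monthly_onprem_opex=1_000,
                          monthly_api_cost=11_000)
large = break_even_months(hardware_cost=300_000, monthly_onprem_opex=5_000,
                          monthly_api_cost=17_500)
print(f"small model: {small:.1f} months")  # small model: 3.0 months
print(f"large model: {large:.1f} months")  # large model: 24.0 months
```

The shape of the formula explains the pattern in the list: bigger models need more (and pricier) hardware up front, so unless the API bill they replace grows just as fast, the break-even point stretches out.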

Why This Is a Board-Level Issue, Not an IT Decision

This stopped being just a model-selection decision a while ago. It’s an infrastructure decision, and infrastructure at this scale is a board-level investment. Once open-source models started closing the performance gap with their commercial counterparts, the real questions became a lot more familiar to anyone who has ever run a data center.

1. The API “Tax” is Now Quantifiable.

We now have a clear framework for calculating the premium you pay for the convenience of an API. For many companies, that convenience is worth it. But for others with steady, predictable workloads, you are essentially paying a perpetual tax for a service you could own outright. The conversation has shifted from “Can we afford to build it?” to “Can we afford not to?”
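One way to quantify the “tax” is to convert your on-prem spend into an effective price per million tokens and compare it with the API’s price. The sketch below assumes straight-line amortization of hardware and a flat monthly operating cost; `onprem_cost_per_million_tokens`, `API_PRICE_PER_MILLION`, and all figures are hypothetical.

```python
def onprem_cost_per_million_tokens(hardware_cost: float,
                                   amortization_months: int,
                                   monthly_opex: float,
                                   tokens_per_month: float) -> float:
    """Effective on-prem price per million tokens (hypothetical helper).

    Assumes hardware is amortized straight-line over `amortization_months`
    and that `monthly_opex` (power, hosting, operations) is flat.
    """
    monthly_total = hardware_cost / amortization_months + monthly_opex
    return monthly_total / (tokens_per_month / 1_000_000)


API_PRICE_PER_MILLION = 10.0  # hypothetical blended API price, USD

busy = onprem_cost_per_million_tokens(36_000, 36, 1_000, 1_000_000_000)
quiet = onprem_cost_per_million_tokens(36_000, 36, 1_000, 500_000_000)
print(f"on-prem at 1B tokens/month:   ${busy:.2f} per million")   # $2.00
print(f"on-prem at 500M tokens/month: ${quiet:.2f} per million")  # $4.00
print(f"API premium at full volume: {API_PRICE_PER_MILLION / busy:.1f}x")  # 5.0x
```

Notice that halving the volume doubles the effective on-prem price. That is why the argument holds for steady, predictable workloads: idle GPUs still cost money, while an API bill shrinks with usage.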

2. The Real Bottleneck Isn’t the Model, It’s Your Network.

The break-even math in the paper assumes your infrastructure can handle the load. That assumption is doing a lot of heavy lifting. What happens when you’re running multiple real-time inference workloads across a distributed network? Can your network handle the east-west traffic without becoming the bottleneck? What about power, cooling, and GPU utilization at scale? These are the hard, unsexy questions that determine whether your on-prem AI strategy succeeds or fails.
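A first pass at the power and GPU-utilization questions is simple capacity arithmetic. This sketch assumes you know (or have benchmarked) peak tokens per second for your workloads and sustained throughput per GPU for your chosen model; the helper names, the 75% utilization target, the 700 W per-GPU draw, and the PUE of 1.5 are illustrative assumptions, not recommendations. It does not model east-west network traffic, which needs its own measurement.

```python
import math


def gpus_needed(peak_tokens_per_sec: float,
                tokens_per_sec_per_gpu: float,
                target_utilization: float = 0.75) -> int:
    """GPUs required to serve peak load while staying under a utilization target."""
    if not 0 < target_utilization <= 1:
        raise ValueError("target_utilization must be in (0, 1]")
    usable_per_gpu = tokens_per_sec_per_gpu * target_utilization
    return math.ceil(peak_tokens_per_sec / usable_per_gpu)


def facility_power_kw(num_gpus: int, watts_per_gpu: float, pue: float = 1.5) -> float:
    """Estimated facility power in kW.

    PUE (power usage effectiveness) = total facility power / IT equipment power,
    so multiplying by it folds in cooling and other overhead. This counts GPU draw
    only; CPUs, memory, storage, and networking gear would add to it.
    """
    return num_gpus * watts_per_gpu * pue / 1000


# Hypothetical workload and hardware figures.
gpus = gpus_needed(peak_tokens_per_sec=20_000, tokens_per_sec_per_gpu=2_500)
power = facility_power_kw(gpus, watts_per_gpu=700)
print(f"{gpus} GPUs, about {power:.1f} kW including cooling overhead")
```

Rounding up with `math.ceil` matters here: a fractional GPU is not something you can buy, and sizing to a utilization target below 100% leaves headroom for traffic spikes.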

3. Security and Sovereignty are No Longer Abstract Concerns.

The moment you bring your AI workloads on-premise, your security posture changes. Data sovereignty is no longer a checkbox on a compliance form; it’s a physical reality you are responsible for. You need a zero-trust architecture that can secure data in motion and at rest, with full observability across every layer of the stack. This is where the enterprise-grade experience of companies like Cisco becomes non-negotiable.

The Strategic Imperative for Executives

If your AI strategy is still just “pick the best API,” you are operating on outdated information. The economics have shifted. Here’s what you need to do:

- Run the Numbers for Your Own Workloads. Use the framework from the paper to model your own usage patterns. What is your actual cost per token? What is your projected volume over the next 12-24 months? The answer will likely surprise you.

- Audit Your Infrastructure Readiness. Don’t just assume your network can handle it. Conduct a thorough assessment of your network capacity, power and cooling infrastructure, and security posture. Identify the gaps before you invest in a single GPU.

- Embrace the Hybrid Reality. For most enterprises, the future is not all-cloud or all-on-prem. It’s a hybrid model where you use APIs for burst capacity and experimentation, while bringing your steady, high-volume, and sensitive workloads in-house. Your infrastructure needs to be able to support this hybrid reality seamlessly and securely.
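For the first step, real workloads rarely stay flat, so a month-by-month projection over your 12–24 month horizon is more honest than a single formula. This sketch assumes API spend scales linearly with token volume, volume grows at a constant monthly rate, and on-prem operating cost stays flat as long as you stay within hardware capacity; the function name and all inputs are hypothetical.

```python
from typing import Optional


def first_break_even_month(hardware_cost: float,
                           monthly_onprem_opex: float,
                           start_tokens_millions: float,
                           api_price_per_million: float,
                           monthly_growth: float,
                           horizon_months: int = 24) -> Optional[int]:
    """First month in which cumulative API spend reaches cumulative on-prem cost.

    Assumptions (hypothetical model): API cost scales linearly with tokens,
    token volume grows by `monthly_growth` each month, and on-prem opex stays
    flat because the workload never exceeds the hardware's capacity.
    Returns None if on-prem does not break even within the horizon.
    """
    onprem_total = hardware_cost
    api_total = 0.0
    tokens_millions = start_tokens_millions
    for month in range(1, horizon_months + 1):
        onprem_total += monthly_onprem_opex
        api_total += tokens_millions * api_price_per_million
        if api_total >= onprem_total:
            return month
        tokens_millions *= 1 + monthly_growth
    return None


# Hypothetical inputs: $40k of hardware, $1k/month to run it, 500M tokens/month at $10/M.
flat = first_break_even_month(40_000, 1_000, 500, 10.0, monthly_growth=0.0)
growing = first_break_even_month(40_000, 1_000, 500, 10.0, monthly_growth=0.10)
print(flat, growing)  # 10 7
```

With these made-up inputs, a flat workload breaks even in month 10, while 10% monthly growth pulls it in to month 7. Returning `None` when nothing breaks even inside the horizon keeps the “stay on the API” outcome explicit. Sustained growth will eventually require more hardware, which this sketch ignores.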

The Bottom Line

The debate is over. On-premise AI is not just for the hyperscalers anymore. It’s a financially viable and strategically critical option for a growing number of enterprises. The economics of AI have shifted. Make sure your infrastructure has shifted with them.

Take the Next Step

Understanding these principles is the first step. Putting them into action is what will set you apart.

AI Consulting for Your Business: If you’re ready to move beyond the hype and start implementing a real AI strategy, my team and I can help. We work with organizations to navigate the complexities of AI adoption, from process optimization to building custom AI agents. Let’s discuss your AI goals by scheduling a consulting call together.

If you’re interested in a custom AI workshop for your business or in your city, please reach out to me directly to start a conversation.

About Jason

Jason Fleagle is a Chief AI Officer and Growth Consultant working with global brands to help with their successful AI adoption and management. He is also a writer, entrepreneur, and consultant specializing in tech, marketing, and growth. He helps humanize data—so every growth decision an organization makes is rooted in clarity and confidence. Jason has helped lead the development and delivery of over 500 AI projects & tools, and frequently conducts training workshops to help companies understand and adopt AI. With a strong background in digital marketing, content strategy, and technology, he combines technical expertise with business acumen to create scalable solutions. He is also a content creator, producing videos, workshops, and thought leadership on AI, entrepreneurship, and growth. He continues to explore ways to leverage AI for good and improve human-to-human connections while balancing family, business, and creative pursuits.

You can learn more about Jason on his website here.

You can learn more about our top AI case studies here on our website.

Learn more about my AI resources here on my youtube channel.

And check out my AI online course.

Sources:

[1] Pan, G., Chodnekar, V., Roy, A., & Wang, H. (2025). A Cost-Benefit Analysis of On-Premise Large Language Model Deployment: Breaking Even with Commercial LLM Services. arXiv preprint arXiv:2509.18101. https://arxiv.org/abs/2509.18101