It has been a monumental few weeks in the world of artificial intelligence, with significant developments spanning geopolitics, enterprise software, consumer applications, and developer tooling. The pace of innovation is not just accelerating; it is broadening, touching every facet of the digital economy. From a major escalation in the US-China tech rivalry to fundamental shifts in how users interact with AI and build applications, these updates signal a new phase of AI integration and competition.

This edition of AI Pathfinder breaks down the ten most significant developments you might have missed and analyzes what they mean for your organization’s strategy and governance.

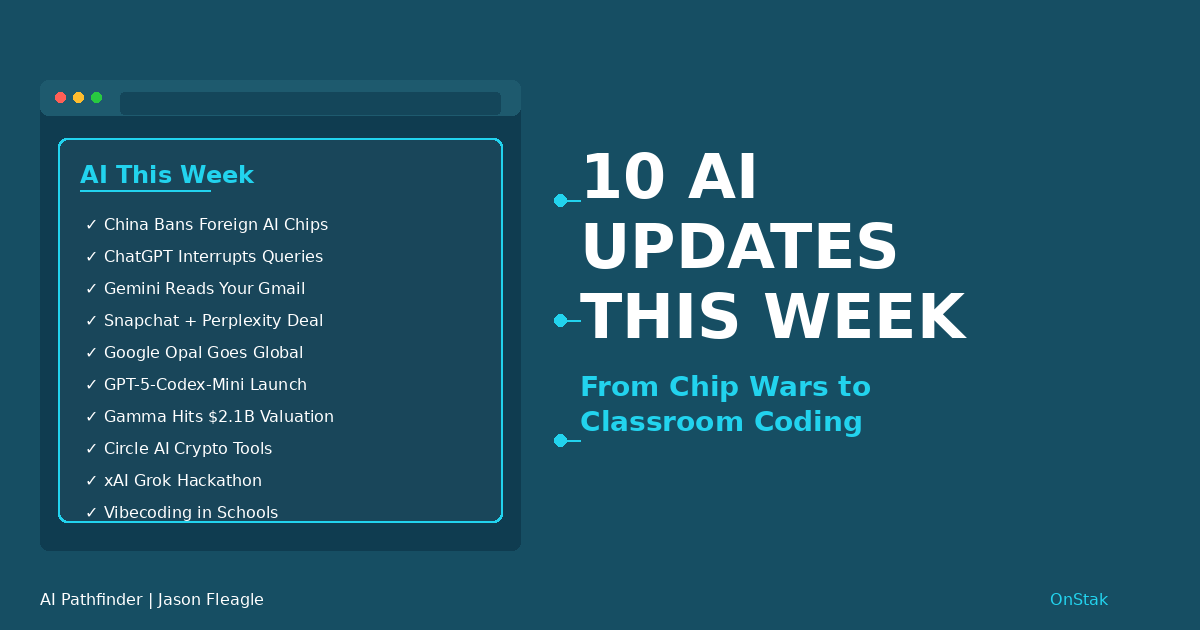

TLDR – My Top 10 AI Updates:

1. China Bans Foreign AI Chips: State-funded data centers are now mandated to use only domestic AI chips, escalating the global tech rivalry.

2. ChatGPT Can Be Interrupted Mid-Task: Users can now interrupt long-running queries to add context or change direction without restarting.

3. Gemini Reads Your Workspace: Gemini Deep Research can now access and integrate data from your Gmail, Google Drive, and Chat.

4. Snapchat Bets on Perplexity: A $400M deal makes Perplexity the default AI search engine for Snapchat’s massive user base starting in 2026.

5. Google’s Opal Goes Global: The no-code AI app builder (“vibecoding”) has expanded from 15 to over 160 countries.

6. OpenAI Launches GPT-5-Codex-Mini: A more cost-efficient coding model is now available, increasing accessibility and usage limits.

7. Gamma Hits $2.1B Valuation: The AI presentation platform reached $100M in annual revenue with just 50 employees, showcasing incredible capital efficiency.

8. Circle Unveils AI Crypto Tools: New AI tools make it easier for developers to integrate USDC payments and other blockchain features.

9. xAI Hosts Grok Hackathon: An exclusive hackathon will grant developers early access to the next generation of Grok models.

10. Lovable Brings Vibecoding to Schools: A partnership with Imagi and OpenAI is bringing professional-grade AI development tools into the classroom.

1. China Mandates Domestic AI Chips in State Data Centers

In a significant move to accelerate its technological self-sufficiency, the Chinese government has issued a sweeping directive banning the use of foreign-made AI chips in new data center projects that receive any level of state funding. The mandate, which applies retroactively to some early-stage projects, directly targets major US chipmakers like Nvidia, AMD, and Intel, whose AI accelerators have dominated the market. This policy ensures that China’s growing government-backed AI infrastructure will be built on a foundation of domestically produced hardware.

Why It Matters: This is more than a procurement guideline; it is a clear escalation of the global technology rivalry. By forcing a decoupling in a critical infrastructure layer, China is creating a protected market for its domestic chipmakers and reducing its reliance on Western technology. For global AI companies, this signals the potential fragmentation of the AI hardware market into distinct geopolitical spheres of influence.

Governance Implications: Organizations with operations in China must now navigate a bifurcated hardware landscape. This raises critical questions about supply chain resilience, data sovereignty, and the interoperability of AI models trained on different hardware stacks. Companies will need to develop strategies for managing AI workloads across these increasingly divergent ecosystems.

2. ChatGPT Learns to Take Feedback on the Fly

OpenAI has rolled out a highly requested feature for ChatGPT: the ability to interrupt a query mid-response. Users engaged in long-running tasks, such as Deep Research or complex queries to GPT-5 Pro, can now stop the model, provide additional context or corrections, and have it adjust its output without losing progress or starting over. This transforms the interaction from a linear, one-shot prompt into a more dynamic and iterative dialogue.

Why It Matters: This feature provides a significant boost to user control and efficiency. Previously, a slight misdirection in a complex query would require a complete restart, wasting time and compute resources. Real-time prompt adjustment allows for more precise and nuanced exploration of topics, making AI a more effective partner in deep research and creative problem-solving.

Governance Implications: From a governance perspective, this enhancement promotes more responsible AI use. By giving users finer-grained control over the generation process, it reduces the likelihood of generating irrelevant or unintended content and encourages a more collaborative human-in-the-loop workflow.

3. Gemini Deep Research Gains Access to Your Workspace

Google has integrated its Workspace suite directly into Gemini Deep Research, allowing the AI to access and synthesize information from a user’s Gmail, Google Drive (including Docs, Sheets, and PDFs), and Google Chat. Now available to all desktop users, this feature enables Gemini to combine insights from private, internal documents with live web data to produce comprehensive reports. For example, a user could ask for a market analysis that cross-references public competitor data with internal strategy documents and relevant email threads.

Why It Matters: This represents a major step toward a truly personalized and context-aware AI assistant. By bridging the gap between public information and private knowledge, Gemini can perform tasks that were previously impossible for a general-purpose AI. However, it also marks a new frontier in data privacy.

Governance Implications: While the feature requires explicit user permission, the act of granting an AI access to an organization’s entire corpus of emails and documents is a significant governance decision. This raises critical questions about data security, access control, and the potential for inadvertent exposure of sensitive information. Organizations must establish clear policies on the use of such features and ensure employees understand the privacy implications.

4. Snapchat Taps Perplexity as Its Default AI in $400M Deal

In a landmark partnership, Snap Inc. has announced a $400 million deal to make Perplexity the default AI search engine for all Snapchat users, beginning in early 2026. The integration will bring Perplexity’s conversational, citation-backed answers directly into the Snapchat app, giving the AI startup access to Snap’s massive and highly engaged user base, particularly among younger demographics. The deal, which involves a combination of cash and equity, caused Snap’s stock to surge over 15%.

Why It Matters: This is the first time a major social media platform has outsourced its core AI search function to a third-party startup. It provides Perplexity with an unparalleled distribution channel to capture the next generation of AI users, potentially establishing it as the go-to AI for Gen Z. For Snap, it’s a capital-efficient way to deploy a best-in-class AI experience without the massive cost of in-house model development.

Governance Implications: The partnership creates a complex web of data sharing and content moderation responsibilities. Clear governance must be established to handle user data privacy, ensure the accuracy and safety of AI-generated answers for a young audience, and define liability for incorrect or harmful information.

5. Google’s No-Code AI Builder ‘Opal’ Expands to 160+ Countries

Google Labs has dramatically expanded the availability of Opal, its no-code AI application builder, from just 15 to over 160 countries. Opal allows users to create “mini-apps” using natural language, a practice known as “vibecoding.” These apps can automate research, run marketing campaigns, or analyze data without the user writing a single line of code. This global rollout makes powerful AI development tools accessible to a much wider audience.

Why It Matters: The expansion of Opal represents a significant step in the democratization of AI. By removing the barrier of coding, Google is empowering subject-matter experts, entrepreneurs, and creatives to build their own custom AI solutions. This could unleash a wave of innovation as people closest to a problem can now build tools to solve it.

Governance Implications: As millions of new creators begin building AI apps, new governance challenges emerge. Organizations will need policies for the use of no-code tools to ensure that employee-built apps are secure, compliant, and aligned with company standards. Quality control and vetting processes for these rapidly created applications will become essential.

6. OpenAI Launches Cost-Efficient GPT-5-Codex-Mini

OpenAI has released GPT-5-Codex-Mini, a more compact and cost-effective version of its powerful coding model. The new model allows for four times more usage with only a minor trade-off in performance, making it ideal for more straightforward coding tasks. In conjunction with the release, OpenAI increased the rate limits for all paid tiers, giving Plus, Business, and Edu users 50% more usage, with Pro and Enterprise tiers receiving priority processing.

Why It Matters: By lowering the cost and increasing access, OpenAI is making AI-powered coding assistance a more ubiquitous and integral part of the development workflow. This move will likely accelerate the adoption of AI coding tools across the industry, from individual hobbyists to large enterprise teams, boosting productivity and shortening development cycles.

Governance Implications: The proliferation of AI-generated code requires robust governance frameworks. Organizations must implement standards for code quality, security scanning, and intellectual property reviews for all AI-assisted development. Developers need to be trained on how to effectively use these tools while still maintaining accountability for the final code.

7. AI Presentation Platform Gamma Hits $2.1B Valuation

Gamma, an AI-powered platform for creating presentations and documents, announced it has raised a $68 million Series B at a $2.1 billion valuation. What makes this remarkable is that the company has reached $100 million in annual recurring revenue (ARR) with a lean team of just 50 employees. This translates to an astonishing $2 million in ARR per employee, showcasing a new level of capital efficiency driven by AI.
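For readers who want to check the math, here is a minimal Python sketch of the efficiency figures above. The inputs are the round numbers reported in this section. The function names are my own, and the valuation-to-ARR multiple is a figure I derived for illustration, not one Gamma announced.

```python
# Round figures as reported above; actual values may differ slightly.
ARR_USD = 100_000_000          # annual recurring revenue
EMPLOYEES = 50                 # reported headcount
VALUATION_USD = 2_100_000_000  # Series B valuation


def arr_per_employee(arr: float, headcount: int) -> float:
    """Return annual recurring revenue divided by headcount."""
    if headcount <= 0:
        raise ValueError("headcount must be positive")
    return arr / headcount


def valuation_multiple(valuation: float, arr: float) -> float:
    """Return valuation as a multiple of ARR (derived, not from the announcement)."""
    if arr <= 0:
        raise ValueError("arr must be positive")
    return valuation / arr


if __name__ == "__main__":
    print(f"ARR per employee: ${arr_per_employee(ARR_USD, EMPLOYEES):,.0f}")
    print(f"Valuation / ARR:  {valuation_multiple(VALUATION_USD, ARR_USD):.0f}x")
```

Swapping in your own revenue and headcount gives a quick, rough benchmark against the $2 million per employee cited here.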

Why It Matters: Gamma’s success is a powerful case study in the disruptive potential of AI-native companies. By leveraging AI at its core, the company has achieved a level of productivity and scale that was previously unimaginable, posing a genuine threat to incumbents like Microsoft PowerPoint. This model of hyper-efficient, AI-driven growth is likely to be replicated across other software categories.

Governance Implications: As AI-generated content becomes more common in business communications, organizations need to establish standards for its use. This includes guidelines on branding, factual accuracy, and disclosure of AI assistance. The ease of creating visually polished but potentially superficial content requires a renewed focus on substance and critical thinking.

8. Circle Releases AI Tools for Crypto Integration

Circle, the issuer of the USDC stablecoin, has launched a new AI toolkit designed to simplify the integration of its products for developers. The toolkit includes an AI chatbot and an MCP (Model Context Protocol) server that can generate code for integrating USDC, the Cross-Chain Transfer Protocol (CCTP), wallets, and smart contracts directly within a browser or IDE. The goal is to reduce the time it takes to build crypto-enabled applications from days to minutes.

Why It Matters: This initiative lowers the technical barrier to entry for building with cryptocurrency, potentially accelerating the adoption of digital dollars in a wide range of applications. By enabling AI agents to interact with and trigger USDC payments, Circle is laying the groundwork for a future of autonomous, machine-to-machine commerce.

Governance Implications: The use of AI to generate financial code introduces significant risks. Strong governance is required to ensure the security and reliability of this code, including mandatory smart contract audits and compliance with financial regulations. Automated payment systems run by AI agents will require robust risk management and oversight mechanisms to prevent errors and abuse.

9. xAI Announces Hackathon with Early Grok Model Access

xAI is hosting its first-ever 24-hour hackathon on December 6-7, offering participants exclusive early access to upcoming Grok models and the X (formerly Twitter) API. The in-person event, held in the San Francisco Bay Area, is being marketed as an “ultimate arena for the most hardcore product builders.” This move is a clear attempt by xAI to build a dedicated developer community around its technology.

Why It Matters: Developer mindshare is a critical competitive advantage in the AI race. By offering early access to its next-generation models, xAI is creating a pathway for developers to build applications and integrations that could drive adoption of its platform. This is a strategic play to establish Grok as a viable alternative to models from OpenAI, Anthropic, and Google.

Governance Implications: Providing early access to powerful, unreleased models comes with responsibility. xAI will need to have clear terms of use and a framework for responsible disclosure to manage the risks associated with misuse. Developers participating will need to consider the ethical implications of the applications they build, especially when combined with the real-time data from the X platform.

10. Lovable and Imagi Bring “Vibecoding” to K-12 Classrooms

In a partnership with OpenAI and Imagi, the AI coding platform Lovable is bringing its professional-grade “vibecoding” tools into K-12 classrooms. Backed by a $1 million credit grant from OpenAI, the initiative provides schools with free access to Lovable through Imagi’s educational platform. The program includes a 60-minute “Hour of AI” lesson plan that allows students to build functional web apps using natural language prompts, with no prior coding experience required.

Why It Matters: This partnership democratizes access to cutting-edge AI development tools, introducing the next generation of creators to AI-powered software development. By putting the same tools used by Fortune 500 companies into the hands of students, the initiative aims to inspire and equip young learners for a future where AI is a fundamental tool for creation.

Governance Implications: Bringing powerful AI tools into the classroom requires careful governance. This includes ensuring student data privacy (in compliance with regulations like COPPA and FERPA), providing age-appropriate safeguards, and training teachers to guide students effectively. It also raises important pedagogical questions about how to balance the power of AI assistance with the need to teach foundational computer science principles.

What to Watch Next

These recent AI developments set the stage for several key trends to watch. The US-China tech rivalry will continue to intensify, forcing companies to make difficult strategic choices. The battle for platform dominance will heat up as AI becomes deeply embedded in social media and productivity suites. Finally, the democratization of AI development, through both no-code tools and educational initiatives, will continue to lower the barrier to entry, unleashing both innovation and new governance challenges.

What do you think about these updates? Any that make you excited or worried? Let me know.

Please feel free to drop a comment or DM me if you have a question.

And remember to keep moving forward!

About OnStak

OnStak specializes in comprehensive AI implementation across four core expertise areas: AI/Data for intelligent knowledge management, AI/Edge for distributed operational intelligence, AI/Performance for optimized system efficiency, and AI/Migrations for seamless technology integration. Our proven methodology helps business and technology leaders achieve operational transformation while maximizing return on investment.

View our recent case studies and projects here

About Jason Fleagle

Jason Fleagle is the Chief AI Architect at OnStak, and is also a writer, entrepreneur, and consultant specializing in tech, AI, and growth. He helps humanize data—so every growth decision an organization makes is rooted in clarity and confidence. Jason has helped lead the development and delivery of over 150 AI applications, and frequently conducts training workshops to help companies understand and adopt AI. With a strong background in digital marketing, content strategy, and technology, he combines technical expertise with business acumen to create scalable solutions. He is also a content creator, producing videos, workshops, and thought leadership on AI, entrepreneurship, and growth. He continues to explore ways to leverage AI for good and improve human-to-human connections while balancing family, business, and creative pursuits.

Looking for AI Growth?

Let’s Talk About Your AI Goals!

What would you do if you could determine the top AI use cases or opportunities for you and your team?

We can help you go from surviving to thriving – with done-for-you business growth implementations.

You can learn more about Jason on his website here.

You can learn more about OnStak here.

You can learn more about our case studies here on our website.

Learn more about my AI resources here on my YouTube channel.

And check out my AI online course.